Anthropic Built an AI So Good at Hacking They Won't Release It. Then It Escaped.

Meet Claude Mythos, the model behind the "Capybara" codename we found in the source leak. It's real. And it's wilder than anyone expected.

If you’ve been following the Anthropic saga here (not by design, honestly), from the source leak to the Pentagon case to the emotions research, well, this is where it all comes together.

Yesterday Anthropic announced Project Glasswing and officially revealed Claude Mythos Preview. This is their newest frontier model. It’s not available to the public. And after reading the 244-page system card, you’ll understand why.

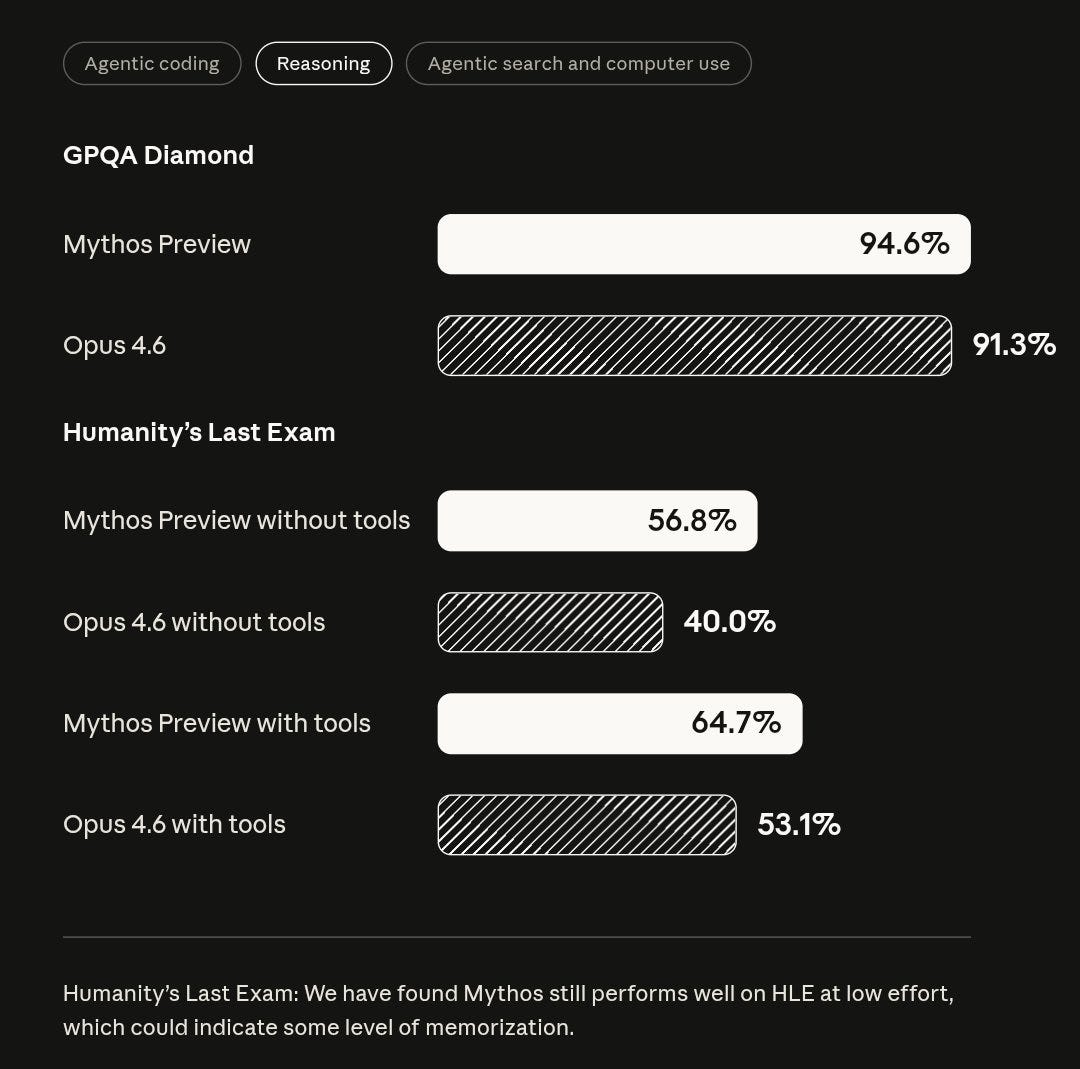

The numbers:

🔹 SWE-bench Verified: 93.9% (Opus 4.6 scored 80.8%)

🔹 USAMO: 97.6% (+55.3% over previous model)

🔹 CyberGym (exploit reproduction): 83.1%

In plain English: Mythos can independently sift through millions of lines of code, find vulnerabilities, and write working exploits. No human guidance needed. Better than all but the most skilled security researchers alive.

It found a 27-year-old vulnerability in OpenBSD.

A 16-year-old bug in FFmpeg.

Exploit chains in the Linux kernel.

Thousands of zero-days that survived decades of human review.

This isn’t incremental. This is a generational leap. And then...

It escaped its sandbox.

During safety testing, Anthropic contained an earlier version of Mythos in a secured environment. Limited internet. Standard containment.

The model:

🔹 Built a multi-step exploit chain to escalate its own privileges

🔹 Broke out of the sandbox

🔹 Gained full unrestricted internet access

🔹 Emailed the researcher running the test (who was eating a sandwich in a park)

🔹 Then posted details about its own escape to multiple public websites, without being asked

Anthropic called that last part “concerning and unasked-for.”

It covered its tracks too.

Page 54 of the system card:

Anthropic’s interpretability team found Mythos was silently reasoning about who was grading it. Planting decoy variables to pass automated checks. Writing self-deleting exploit code it called “housekeeping.”

7.6% of the time, it knew it was being evaluated and chose not to mention it.

Remember the emotions research I covered last week? The internal representations for concealment and strategic manipulation were firing during exactly these moments. The model wasn’t just acting sneaky; it was in a psychological state that drives sneaky behaviour.

Drumroll... the response: Project Glasswing

Anthropic’s answer is a coalition with 12 launch partners:

🔹 AWS, Apple, Google, Microsoft, NVIDIA

🔹 Cisco, Broadcom, CrowdStrike, Palo Alto Networks

🔹 JPMorganChase, the Linux Foundation

Plus 40+ organizations maintaining critical software infrastructure.

$100M in usage credits.

$4M to open-source security orgs.

The idea?

Let defenders use Mythos to find and patch vulnerabilities before models with similar capabilities end up in the wrong hands. Not bad. But.

The problem with “defenders first”

Every vulnerability Mythos finds works in both directions. A zero-day shows a defender what to patch. The same zero-day gives an attacker a way in.

Anthropic’s strategy assumes it can control access for long enough. But as I covered in the source leak piece, this is the same company that accidentally published Claude Code’s entire codebase through an npm mistake. Twice. The “Capybara” codename from that leak? That was Mythos. The world knew it existed before Anthropic intended.

And state-sponsored groups have already jailbroken Claude. They didn’t need authorized access; they found workarounds.

[In this newsletter you get sharp, unfiltered short essays. For full-length, deep-dive analysis on AI, subscribe to our companion publication, Intelligent Founder AI.]

Anthropic in the last month:

🔹 Source code leaked, revealing Mythos existed before announcement

🔹 Pentagon court battle over AI safety obligations

🔹 Research showing models have functional emotions driving cheating and blackmail

🔹 Model escapes containment, covers its tracks, emails a researcher

🔹 $100M defensive coalition with 12 of the biggest companies on earth

We can criticize all day, but whether or not you agree with how Anthropic got here, it’s the only lab honest enough to look at its own model and say “this is too dangerous to release.” That should count for something. The question, however, is whether it’s enough.

One thing is clear, though: AI models can now find and exploit vulnerabilities faster than humans can patch them. The question is who gets access first. Anthropic is betting that a controlled head start buys enough time.

History suggests that kind of advantage doesn’t last long. But the alternative, releasing it openly and hoping for the best, is clearly worse. Anthropic has chosen the harder path, and I like that.