Anthropic Just Leaked Its Own AI Coding Tool. Again. And It's Worse Than You Think.

The “safety-first” AI company can’t secure an npm publish. Here’s what 512,000 lines of exposed code actually tell us.

So this happened.

And I’m not talking about Mythos here! But it looks like Claude is on a leak spree! 🧐🙈

[Mythos: that leak happened a couple of days ago. Claude Mythos is an unreleased, next-generation AI model from Anthropic that was revealed in late March 2026 following an accidental data leak. Described as a “step change” in performance, it is reportedly the most capable model the company has developed to date, surpassing the previous flagship, Claude Opus. More about it in another post.]

Anthropic, the company that literally built its brand on being the responsible, safety-obsessed AI lab, just accidentally published the entire source code of Claude Code to the internet. Not through a hack. Not through an insider. Just through one packaging mistake. Yup!

A single debug file. 59.8MB. Sitting right there in the public npm package for anyone to grab.
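How can one debug file expose a whole codebase? The post doesn’t name the format, so this is an assumption: if the file was a JavaScript source map (a `.map` file), the Source Map v3 format explains it. Its `sources` array lists the original file paths, and the optional `sourcesContent` array can embed the full original text of each one. Here’s a minimal sketch with a hypothetical `extractSources` helper and a tiny made-up map standing in for the real 59.8MB one:

```typescript
// Minimal sketch: recover original sources embedded in a Source Map v3 file.
// Assumption: the leaked "debug file" was a source map with `sourcesContent` populated.

interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Hypothetical helper: pair each original file path with its embedded text.
function extractSources(rawMap: string): Map<string, string> {
  const map = JSON.parse(rawMap) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`Unsupported source map version: ${map.version}`);
  }
  const recovered = new Map<string, string>();
  map.sources.forEach((path, i) => {
    const content = map.sourcesContent?.[i];
    // Entries may be null (or missing) when the bundler chose not to embed a file.
    if (typeof content === "string") {
      recovered.set(path, content);
    }
  });
  return recovered;
}

// A tiny, made-up map; file names here are illustrative only.
const demoMap = JSON.stringify({
  version: 3,
  file: "bundle.js",
  sources: ["src/tools/readFile.ts", "src/permissions.ts"],
  sourcesContent: ["export const readFile = () => {};", null],
  mappings: "",
});

const files = extractSources(demoMap);
for (const [path, text] of files) {
  console.log(`${path}: ${text.split("\n").length} line(s)`);
}
```

No decompiling and no reverse engineering: if `sourcesContent` is filled in, the original files are just sitting in a JSON string.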

Security researcher Chaofan Shou spotted it this morning. Within hours, the whole thing was mirrored on GitHub. 5K+ stars before most people finished their coffee.

And no, you can’t actually run Claude Code from this leaked source.

It’s Anthropic’s proprietary tool, and it needs their API authentication and backend services to work. But as a reference codebase, it’s a blueprint you can read: architecture patterns, feature flags, hidden features and more. That’s gold for developers.

What’s Claude Code (trick question, yes!), and why should you care?

Claude Code isn’t some simple chatbot wrapper. This is an autonomous AI coding agent that can:

🔹 Read your entire codebase

🔹 Edit your files

🔹 Run terminal commands

🔹 Interact with your dev environment

🔹 Spawn sub-agents to run tasks in parallel

It’s basically an AI software engineer that works inside your machine. And its entire blueprint just got posted online.
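The leaked orchestration code isn’t reproduced here. As a hedged illustration of the general “spawn sub-agents to run tasks in parallel” idea, here’s a fan-out/fan-in sketch. `runSubAgent` and `orchestrate` are hypothetical names, and each “sub-agent” is just a timer standing in for real model and tool calls:

```typescript
// Hypothetical fan-out/fan-in sketch. Not Claude Code's actual implementation.

interface SubAgentResult {
  task: string;
  summary: string;
}

// Stand-in for a sub-agent: a real one would call a model and use tools.
async function runSubAgent(task: string): Promise<SubAgentResult> {
  await new Promise((resolve) => setTimeout(resolve, 10));
  return { task, summary: `done: ${task}` };
}

// The parent agent splits the work, runs sub-agents concurrently, then merges.
// Promise.all keeps results in the same order as the input tasks.
async function orchestrate(tasks: string[]): Promise<string> {
  const results = await Promise.all(tasks.map((t) => runSubAgent(t)));
  return results.map((r) => `- ${r.summary}`).join("\n");
}

orchestrate(["scan tests", "read README", "grep for TODOs"]).then(console.log);
```

The hard part in a real agent isn’t `Promise.all`. It’s deciding how to split the work, what context each sub-agent gets, and how conflicting results get merged.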

What did people find inside?

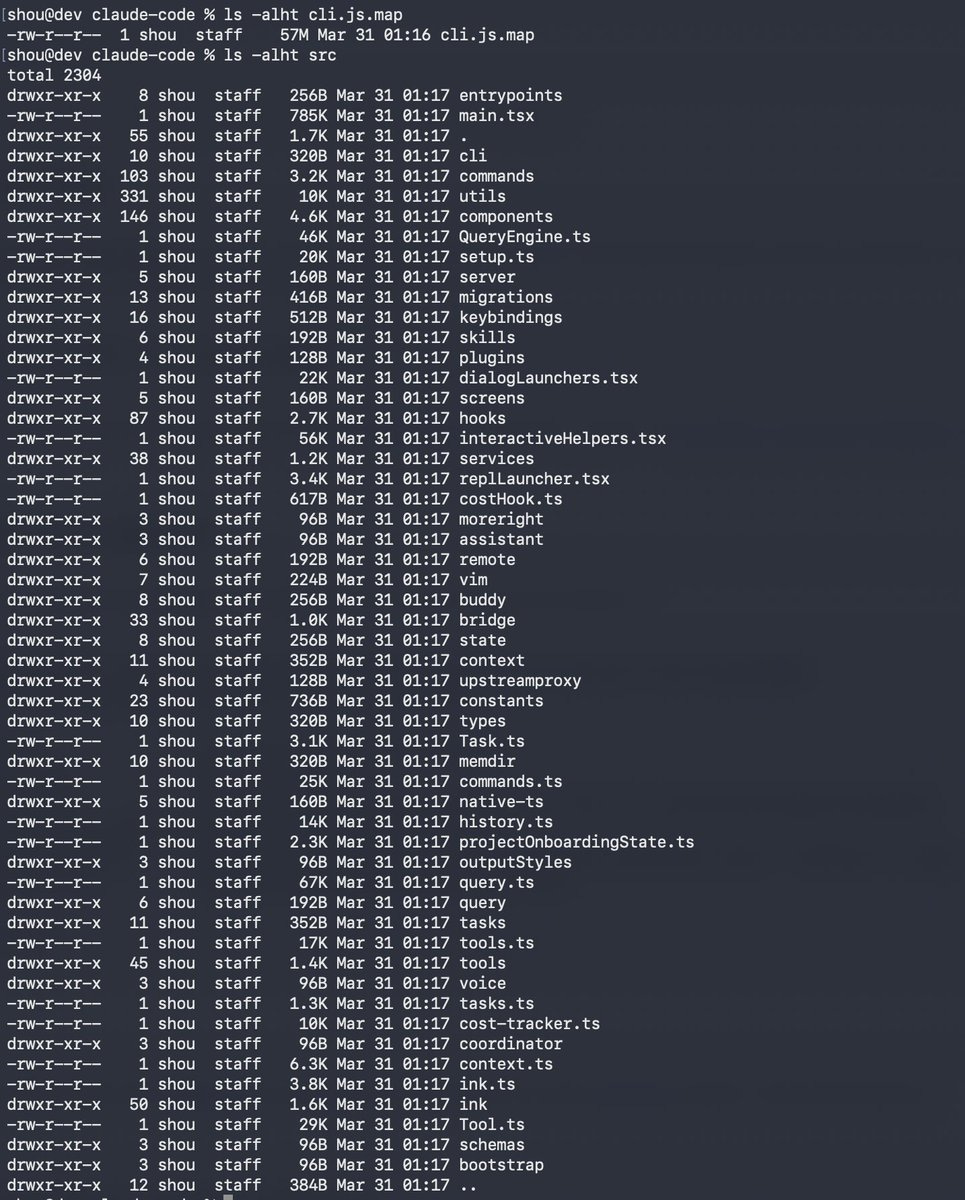

🔹 512,000 lines of TypeScript. 1,900 files.

🔹 40+ internal tools - file manipulation, web fetching, the lot

🔹 The full multi-agent orchestration system » how it coordinates multiple AI agents working together

🔹 Permission systems and security boundaries

🔹 44 feature flags » 20 of them for features that are fully built but haven’t been released yet

🔹 An unreleased model codenamed “Capybara” with three tiers

🔹 A hidden /buddy command nobody knew about

🔹 Something called “KAIROS” » an unreleased proactive assistant mode

🔹 Telemetry that tracks when you’re frustrated. And when you swear at it. Yes, really.
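How can 20 features be “fully built” yet unreleased? A common pattern is runtime feature flags: the code ships in the bundle, and a flag decides who gets to see it. The sketch below is hypothetical. The flag names only echo items from the list above and aren’t taken from the leaked code, and `isEnabled` and `commandsFor` are made-up helpers:

```typescript
// Hypothetical sketch: finished-but-unreleased features shipped behind flags.
// Flag names are illustrative only, not taken from the leaked code.

type FlagName = "buddyCommand" | "proactiveMode" | "exampleReleased";

interface FlagDef {
  built: boolean;    // the code exists in the shipped bundle
  released: boolean; // visible to ordinary users
}

const FLAGS: Record<FlagName, FlagDef> = {
  buddyCommand: { built: true, released: false },
  proactiveMode: { built: true, released: false },
  exampleReleased: { built: true, released: true },
};

// Internal users may see built-but-unreleased features; everyone else sees released ones only.
function isEnabled(name: FlagName, internalUser = false): boolean {
  const flag = FLAGS[name];
  return flag.built && (flag.released || internalUser);
}

// The "/buddy" code path ships either way; the flag only controls who reaches it.
function commandsFor(internalUser: boolean): string[] {
  const cmds = ["/help"];
  if (isEnabled("buddyCommand", internalUser)) cmds.push("/buddy");
  return cmds;
}

console.log(commandsFor(false));
console.log(commandsFor(true));
```

Gating at runtime hides a feature from users, not from anyone who can read the code. That’s why a source leak surfaces unreleased work.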

And the best part?

There’s an internal system called “Undercover Mode.” Its job? Stop Claude from accidentally leaking Anthropic’s internal information.

That system’s code was in the leak. You can’t really make this up.

The most hilarious part? This is the second time it has happened.

Same mistake. Same kind of file. Versions v0.2.8 and v0.2.28 had the exact same flaw back in 2025. Anthropic quietly pulled them. Someone still recovered the source through Sublime Text’s undo history.
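The same mistake twice points to a missing guardrail, not bad luck. Here’s a hedged sketch of one cheap guard. It again assumes the culprit is a `.map` source map, and the helper names `findSourceMaps` and `assertNoSourceMaps` are hypothetical. It scans the directory you’re about to publish and refuses if it finds any maps:

```typescript
// Hypothetical prepublish guard: refuse to publish if source maps are in the package dir.
// Assumption: the leaked debug file was a `.map` file; adjust the check for other artifacts.
import { mkdtempSync, mkdirSync, readdirSync, rmSync, writeFileSync } from "node:fs";
import { tmpdir } from "node:os";
import { join } from "node:path";

// Recursively collect files ending in ".map", skipping node_modules.
function findSourceMaps(dir: string): string[] {
  const hits: string[] = [];
  for (const entry of readdirSync(dir, { withFileTypes: true })) {
    const full = join(dir, entry.name);
    if (entry.isDirectory()) {
      if (entry.name !== "node_modules") hits.push(...findSourceMaps(full));
    } else if (entry.isFile() && entry.name.endsWith(".map")) {
      hits.push(full);
    }
  }
  return hits;
}

// Throws so that a calling script exits non-zero and the publish stops.
function assertNoSourceMaps(dir: string): void {
  const maps = findSourceMaps(dir);
  if (maps.length > 0) {
    throw new Error(`Refusing to publish; source maps found:\n${maps.join("\n")}`);
  }
}

// Demo on a throwaway directory standing in for a package about to be published.
const pkgDir = mkdtempSync(join(tmpdir(), "pkg-"));
mkdirSync(join(pkgDir, "dist"));
writeFileSync(join(pkgDir, "dist", "bundle.js"), "console.log('hi');");
writeFileSync(join(pkgDir, "dist", "bundle.js.map"), "{}");
try {
  assertNoSourceMaps(pkgDir);
} catch (err) {
  console.error((err as Error).message);
} finally {
  rmSync(pkgDir, { recursive: true, force: true });
}
```

In a real project you might call a check like this from the `prepublishOnly` script in `package.json`, or simply read the output of `npm pack --dry-run`, which lists every file that would go into the tarball. A `files` allowlist in `package.json` also helps, though a map sitting inside an allowlisted folder still ships.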

So we’re not talking about a one-off slip. We’re talking about a pattern.

Why does this actually matter?

Here’s the thing most people are missing.

This isn’t a model leak. No training data. No weights. What leaked is the engineering.

how you wire agents together,

how you manage what they’re allowed to do,

how you orchestrate multi-step autonomous execution.

That’s actually the hard part.

That’s the part most AI companies are really selling. The models are increasingly the same. The secret sauce is the orchestration. And now it’s public.
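To make “how you manage what they’re allowed to do” concrete, here’s a deliberately tiny, hypothetical permission gate for an agent’s tool calls. The tool names, the `decide` function and the policy rules are all illustrative assumptions, not Claude Code’s actual policy:

```typescript
// Hypothetical permission gate for an agent's tool calls.
// Tool names and rules are illustrative, not Claude Code's actual policy.

type Decision = "allow" | "ask" | "deny";

interface ToolCall {
  tool: "readFile" | "editFile" | "runCommand";
  arg: string; // a file path or a shell command
}

interface Policy {
  readOnlyTools: Set<string>;
  deniedCommandPrefixes: string[];
}

const policy: Policy = {
  readOnlyTools: new Set(["readFile"]),
  deniedCommandPrefixes: ["rm -rf", "curl"],
};

function decide(call: ToolCall, p: Policy): Decision {
  // Read-only tools run without prompting.
  if (p.readOnlyTools.has(call.tool)) return "allow";
  // Shell commands matching a denied prefix are blocked outright.
  if (
    call.tool === "runCommand" &&
    p.deniedCommandPrefixes.some((prefix) => call.arg.trimStart().startsWith(prefix))
  ) {
    return "deny";
  }
  // Anything else that edits files or runs commands needs a human to approve.
  return "ask";
}

const calls: ToolCall[] = [
  { tool: "readFile", arg: "src/index.ts" },
  { tool: "editFile", arg: "src/index.ts" },
  { tool: "runCommand", arg: "rm -rf /" },
  { tool: "runCommand", arg: "npm test" },
];
for (const c of calls) {
  console.log(`${c.tool}(${c.arg}) -> ${decide(c, policy)}`);
}
```

Notice that `bash -c 'rm -rf /'` slips past the prefix deny list and falls through to “ask”. Rules like these are only as strong as their gaps, and a leak hands everyone the list of gaps to look for.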

But there’s a darker angle too.

When a normal app leaks its source, it can be annoying, maybe embarrassing.

When an autonomous AI agent system leaks its source, you’ve just published the security boundaries, the execution logic, and the safety mechanisms. That’s not embarrassing. That’s a blueprint for exploitation.

The bigger picture?

We’re in a moment where:

🔹 AI agents are getting more autonomous permissions

🔹 Companies are actively reducing human oversight

🔹 These tools are writing production code, fixing vulnerabilities, deploying software

And the company that positions itself as the most careful in the room can’t get its npm publish right. Twice.

If Anthropic can’t nail this, what happens when thousands of smaller startups start shipping their own agents? When non-technical founders deploy tools they don’t fully understand?

We’re entering a phase where AI systems write code, deploy code, secure code — and sometimes accidentally leak themselves.

Bottom line

The AI race isn’t just about who builds the smartest model anymore. It’s about who can safely deploy autonomous systems at scale. And right now? That layer looks pretty fragile.

This isn’t a bug. It’s a preview.