Gemini 3.1 Pro Just Dropped. Here’s What the Numbers (and Users) Actually Say.

Google just dropped Gemini 3.1 Pro and the headline is simple:

it got a lot smarter at reasoning.

The model more than doubled its score on ARC-AGI-2, the test that throws completely novel logic puzzles at AI to see if it can actually think, not just pattern-match. That’s the biggest single-release jump any lab has pulled off. It’s also crushing agentic benchmarks, meaning it’s better at chaining together multi-step tasks the way a junior analyst would, i.e. browsing, using tools, and making decisions across long workflows.

If you’re building AI agents or automation pipelines, this is the one to test right now.

The catch, though? Users are already flagging that it feels different.

The creative writing and personality got flattened; one user said “the soul is gone.” Coders on Hacker News say real-world performance doesn’t match the benchmark hype. And Claude still produces noticeably more polished output for things like memos, narratives, and strategy docs. So the play for operators is quite clear:

route your hardest reasoning and agentic tasks to Gemini 3.1 Pro,

keep Claude for the writing-heavy stuff, and

stop pretending any single model wins everywhere.
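If you want that routing rule in code, here is a minimal sketch. It assumes you already tag each task with a kind before dispatching it. The model labels and task kinds are hypothetical placeholders, not official identifiers, so swap in whatever your providers actually expose.

```python
# Hypothetical model labels for illustration only; replace them with the
# identifiers your providers actually expose.
GEMINI_PRO = "gemini-3.1-pro"
CLAUDE = "claude-opus-4.6"

# Task kinds are an assumption of this sketch, not something the models define.
ROUTES = {
    "reasoning": GEMINI_PRO,  # hardest reasoning tasks
    "agentic": GEMINI_PRO,    # multi-step, tool-using workflows
    "writing": CLAUDE,        # memos, narratives, strategy docs
}


def pick_model(task_kind: str) -> str:
    """Return the model label for a task kind, failing loudly on unknown kinds."""
    if task_kind not in ROUTES:
        raise ValueError(f"no route configured for task kind {task_kind!r}")
    return ROUTES[task_kind]


print(pick_model("agentic"))
print(pick_model("writing"))
```

Failing on unknown task kinds is deliberate: a silent default would quietly recreate the "one model for everything" habit this section argues against.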

The numbers that matter:

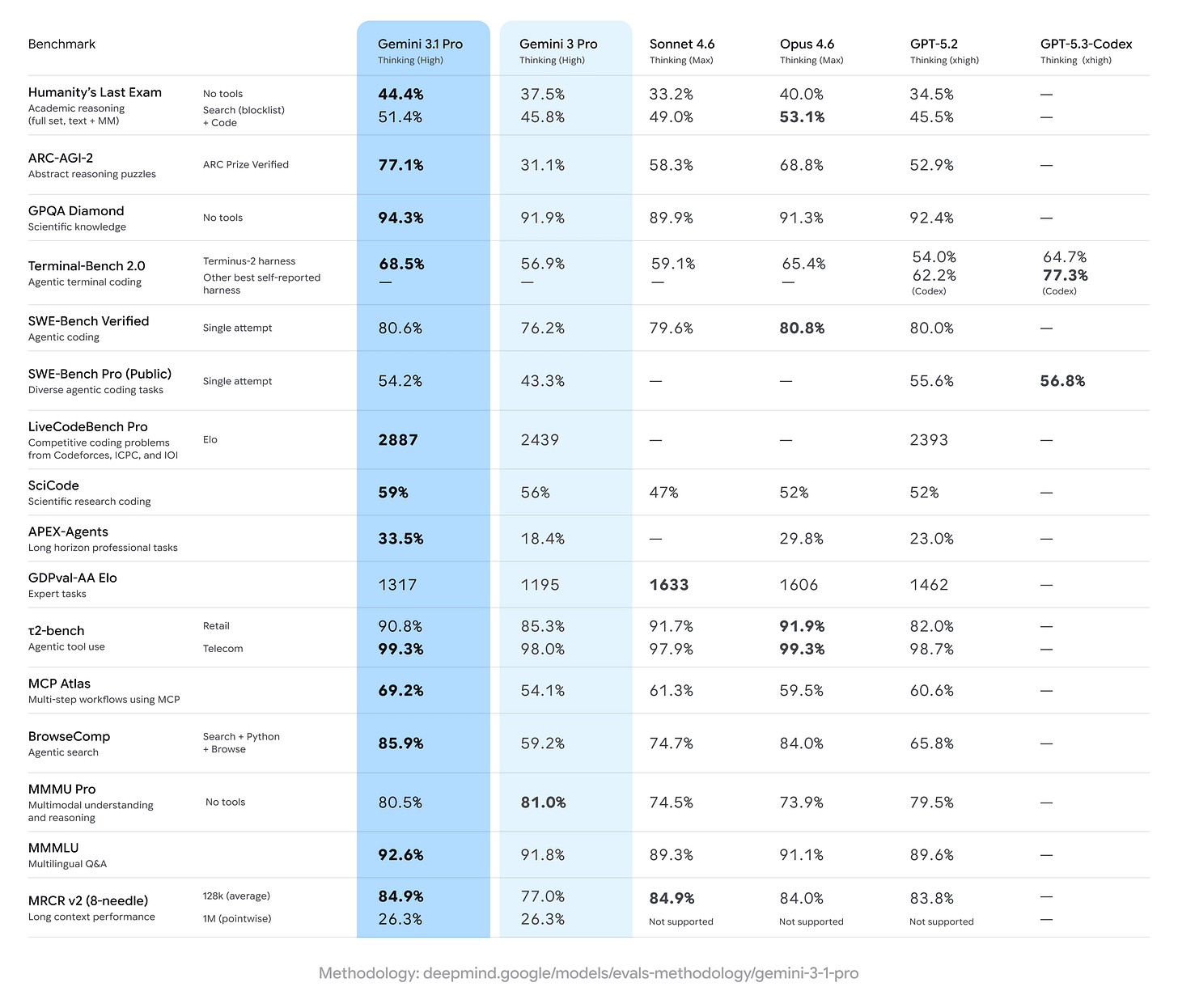

ARC-AGI-2: 77.1% — up from 31.1% on Gemini 3.0 Pro (next best: Opus 4.6 at 68.8%)

GPQA Diamond (grad-level science): 94.3% — highest ever recorded

APEX-Agents (real-world tasks): 33.5% — nearly doubled, beating all competitors

LiveCodeBench Pro Elo: 2,887 — a ~500-point jump

Telecom domain (τ2-bench): 99.3% — tied for best ever

SWE-Bench Verified (coding): 80.6% — essentially tied with Claude and GPT-5.2
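To sanity-check the ARC-AGI-2 headline, here is the arithmetic using only the three scores quoted above. Nothing here is new data; it just makes the size of the jump and the lead explicit.

```python
# Scores as quoted in the list above (percent).
arc_gemini_30 = 31.1   # Gemini 3.0 Pro
arc_gemini_31 = 77.1   # Gemini 3.1 Pro
arc_opus_46 = 68.8     # Opus 4.6, the next best

ratio = arc_gemini_31 / arc_gemini_30      # relative improvement
gain_points = arc_gemini_31 - arc_gemini_30  # absolute improvement
lead_points = arc_gemini_31 - arc_opus_46    # margin over the runner-up

print(f"{ratio:.2f}x the previous score")           # 2.48x the previous score
print(f"+{gain_points:.1f} percentage points")      # +46.0 percentage points
print(f"{lead_points:.1f} points ahead of Opus 4.6")  # 8.3 points ahead of Opus 4.6
```

So the score went up by roughly 2.5x, which is more than a doubling, while the lead over the next best model is a much narrower 8.3 points.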

So How Does This Actually Change Your Life?

A benchmark doubling doesn’t make your Tuesday easier. What changes is what you can delegate.

Gemini 3.1 Pro’s real leap is agentic: it can now chain together multi-step tasks, browse, call APIs, and build things end-to-end without falling apart halfway through. The frontier isn’t about smarter chat anymore; it’s about AI that does work instead of just talking about it.

Where it helps:

Complex research synthesis across dense, technical sources » 1M token context window handles entire codebases or paper collections in a single pass

Multi-step automations and data pipelines with fewer broken runs

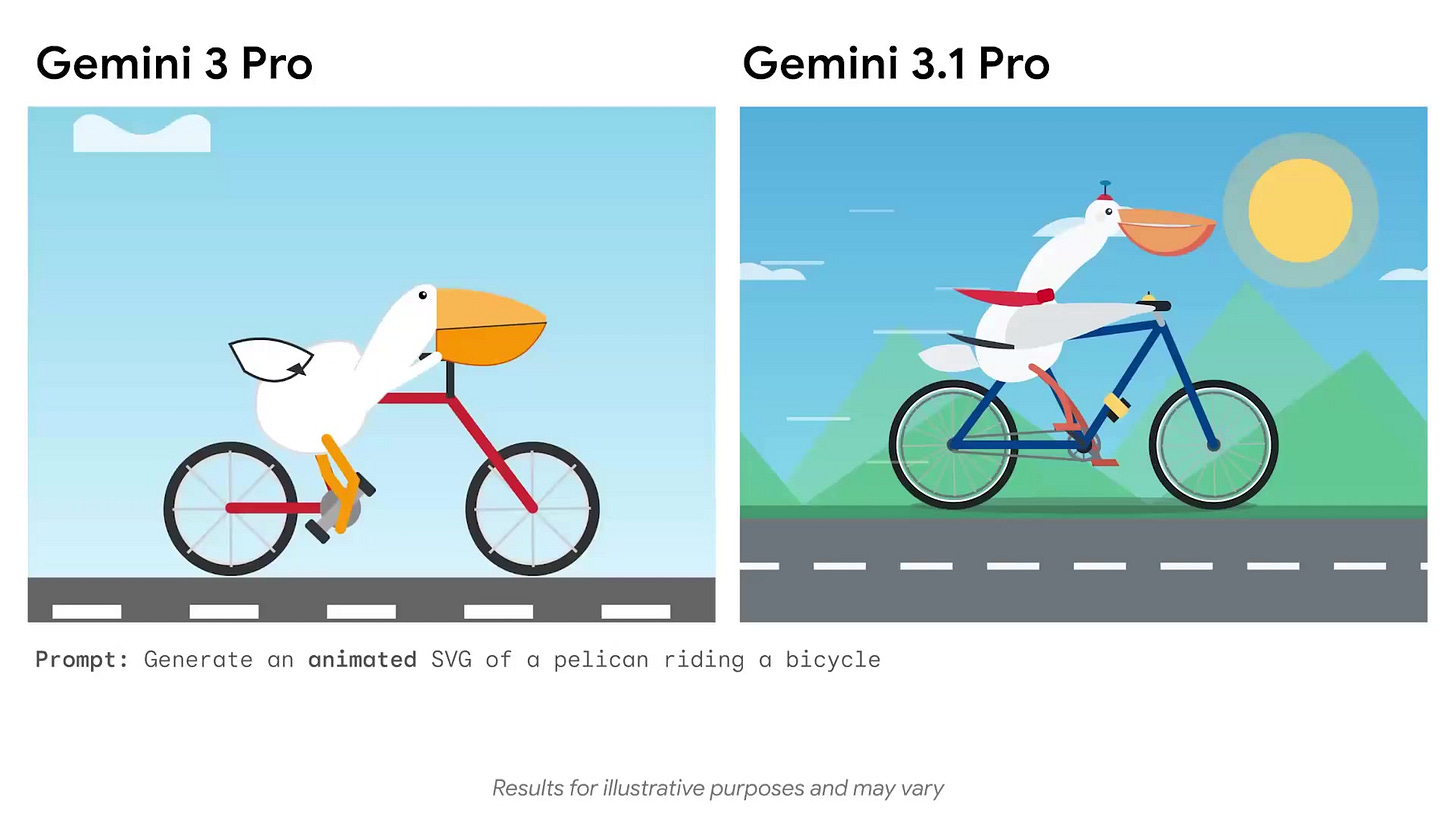

Code-based animated output » SVGs, dashboards, interactive tools from a single prompt, all vector-based and embeddable

Output truncation is fixed » a persistent complaint with 3.0 Pro that’s now resolved

Where it doesn’t:

Daily AI conversations feel flatter » personality and creative nuance got traded for reasoning

Long context drifts on 150K+ token documents

Knowledge cutoff is January 2025, so it doesn’t know the last 13 months

Claude still wins on knowledge-intensive work (GDPval-AA), tool-augmented reasoning, and computer use via GUI

It’s worth noting, though, that API pricing is $2/M input tokens and $12/M output tokens under 200K context, which works out to roughly half the cost of Claude Opus 4.6.
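To turn those rates into a per-call number, here is a rough estimator. It is a sketch under two assumptions: that "under 200K context" refers to the prompt size, and that you only care about calls below that threshold, since the rate above it is not quoted here. The function name is made up for illustration.

```python
# Rates quoted above, in USD per million tokens.
INPUT_USD_PER_M = 2.00
OUTPUT_USD_PER_M = 12.00

# Assumption: the quoted rates apply when the prompt is under 200K tokens.
CONTEXT_THRESHOLD = 200_000


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one call at the quoted under-200K rates."""
    if input_tokens >= CONTEXT_THRESHOLD:
        # The rate for larger prompts is not given, so refuse rather than guess.
        raise ValueError("quoted rates only cover prompts under 200K tokens")
    input_cost = input_tokens / 1_000_000 * INPUT_USD_PER_M
    output_cost = output_tokens / 1_000_000 * OUTPUT_USD_PER_M
    return input_cost + output_cost


# A 50K-token prompt with a 2K-token answer: $0.10 input + $0.024 output.
print(f"${estimate_cost_usd(50_000, 2_000):.3f}")  # $0.124
```

Note how output tokens cost six times as much as input tokens at these rates, so long generated answers move the bill faster than long prompts do.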

A new thinking_level parameter lets you dial reasoning between low, medium, high, and max, so you can trade speed for depth per task. And it’s already live in the Gemini app, NotebookLM, AI Studio, Gemini CLI, and Vertex AI, though the free tier defaults to Flash, not Pro.
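One way to use that dial is to decide the level per task type up front. The sketch below only picks a level from the four values named above; it does not call any SDK, and the task labels and function name are hypothetical. How the value is actually passed to the API should be checked against the official documentation.

```python
# The four levels named above.
THINKING_LEVELS = ("low", "medium", "high", "max")

# Hypothetical task labels and an illustrative mapping; tune to your own workload.
LEVEL_BY_TASK = {
    "classification": "low",       # fast, shallow decisions
    "summarization": "medium",
    "code_review": "high",
    "multi_step_planning": "max",  # slowest, deepest reasoning
}


def thinking_level_for(task_kind: str, default: str = "medium") -> str:
    """Pick a thinking level for a task kind, falling back to a default."""
    level = LEVEL_BY_TASK.get(task_kind, default)
    if level not in THINKING_LEVELS:
        raise ValueError(f"unknown thinking level {level!r}")
    return level


print(thinking_level_for("classification"))
print(thinking_level_for("something_else"))
```

Keeping the mapping in one table makes the speed-versus-depth trade-off a visible config choice instead of something scattered across call sites.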

The Takeaway

Nothing different from what we’ve already established.

We’re past the era of picking one AI model and going all-in. Gemini 3.1 Pro is now the best reasoner and the best agent runner in the room, at half the price of its closest rival. But it writes like an engineer, not a storyteller.

The smart move in 2026 isn’t loyalty to a single model. It’s building workflows that route the right task to the right model: hard thinking to Gemini, polished writing to Claude, speed jobs to Flash. The AI race isn’t about who’s “best” anymore. It’s about who uses them best.