Nemotron 3 Super is Nvidia's 1M‑Token Open‑Weight Model Hiding in Plain Sight

Now live in Perplexity and across major platforms, Nemotron 3 Super shows how open‑weight, long‑context models are becoming the new default for agentic AI.

The most interesting frontier model Perplexity quietly added this week isn’t from OpenAI or Anthropic. It’s Nvidia’s Nemotron 3 Super, a 120B‑parameter, open‑weight model with a native 1M‑token context window. Open‑weight here means enterprises can fine‑tune and deploy it on their own infrastructure, not just via a single vendor API. It’s available today inside Perplexity, on OpenRouter, Hugging Face, and Together, and of course on build.nvidia.com. Here’s why the 1M‑token, open‑weight, available‑everywhere part matters more than the 120B headline number that X is fixated on.

Before talking about why this matters, it’s worth asking a simple question: where can you actually use Nemotron 3 Super today?

Where you can actually run it

Perplexity: Nemotron 3 Super 120B with Thinking is in the Perplexity advanced models lineup for Pro/Max. It’s orchestrated alongside ~20 other models in Computer and available via API.

Nvidia: Hosted on build.nvidia.com and available as a Nemotron 3 Super NIM microservice, so it runs anywhere from workstations to cloud DGX.

Open platforms:

Hugging Face: open weights plus Transformers/vLLM integration and Dell/HPE enterprise hubs.

OpenRouter: exposed as a public endpoint alongside other leading open‑weight models.

Together AI, Baseten, others: dedicated inference offerings emphasizing 1M‑token, high‑throughput, multi‑agent workloads.
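OpenRouter serves its models through an OpenAI‑style chat completions endpoint, so a call there looks like any other chat request. The sketch below is a minimal illustration, not official sample code: `MODEL_ID` is a placeholder (the real slug has to be copied from the provider’s model listing), and the `OPENROUTER_API_KEY` environment variable name is an assumption.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
# Placeholder, not a real identifier: copy the slug from the model listing.
MODEL_ID = "<nemotron-3-super-model-slug>"


def build_request(prompt: str, model: str = MODEL_ID, api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the text of the first choice."""
    req = build_request(prompt, api_key=os.environ["OPENROUTER_API_KEY"])
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Other hosts that expose an OpenAI‑compatible API should only need a different URL and model slug.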

Once you know it’s everywhere, the next question is how Nemotron actually stacks up against other long‑context models.

Key comparative points:

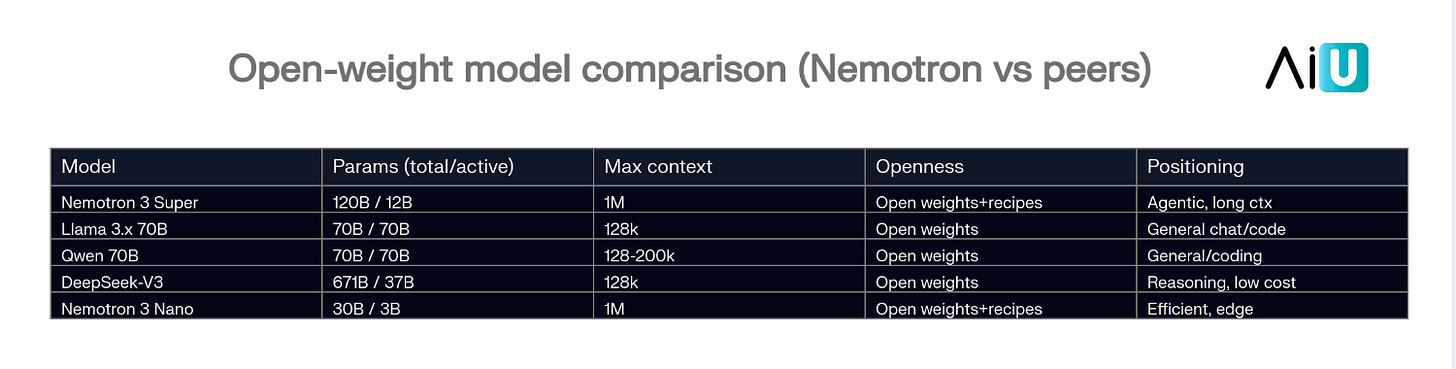

1M‑token context: Nemotron is one of the first mainstream open‑weight model families with a native 1M context across Nano and Super, using Mamba layers whose state memory stays constant as sequence length grows.

MoE efficiency: Despite 120B total parameters, only ~12B are active per token, which Nvidia claims yields 5x throughput and ~50% faster generation vs. comparable open models in multi‑agent workloads.

Open‑weight plus recipes: Nvidia isn’t just shipping a checkpoint; they’re publishing datasets, training recipes, and an open Nemotron license aimed at enterprise fine‑tuning and on‑prem deployment.
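To see why the memory and MoE points matter, it helps to run the arithmetic. The sketch below compares how an attention KV cache grows with sequence length against a fixed‑size state‑space (Mamba‑style) state, and computes the share of parameters active per token. Every layer count and dimension here is a hypothetical illustration, not Nemotron’s published architecture; only the 12B and 120B figures come from the text above.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Attention KV cache for one sequence: keys + values, linear in seq_len."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def ssm_state_bytes(n_layers, d_inner, d_state, bytes_per_value=2):
    """Simplified recurrent state for state-space layers: no seq_len term."""
    return n_layers * d_inner * d_state * bytes_per_value


def active_fraction(active_params, total_params):
    """Share of parameters used per token in a mixture-of-experts model."""
    return active_params / total_params


# Hypothetical configs, chosen only to make the scaling visible.
attn_cfg = dict(n_layers=40, n_kv_heads=8, head_dim=128)
ssm_cfg = dict(n_layers=40, d_inner=8192, d_state=128)

if __name__ == "__main__":
    for seq_len in (8_000, 128_000, 1_000_000):
        kv_gib = kv_cache_bytes(seq_len, **attn_cfg) / 2**30
        ssm_gib = ssm_state_bytes(**ssm_cfg) / 2**30
        print(f"{seq_len:>9,} tokens: KV cache {kv_gib:7.2f} GiB, SSM state {ssm_gib:5.2f} GiB")
    print(f"active parameters per token: {active_fraction(12e9, 120e9):.0%}")
```

The KV cache term scales with every extra token while the state‑space term does not, which is the property the Mamba layers are there to exploit. The MoE ratio works out to roughly a tenth of the weights doing work on any given token.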

But Nemotron isn’t just another big checkpoint; it slots neatly into Nvidia’s broader ‘agentic AI stack’ story.


What 1M tokens unlocks

So what does a native 1M‑token window actually buy you in practice?

You can treat an entire service or codebase as a single unit of reasoning: load tens of thousands of files into one context and ask Nemotron to map dependencies, spot dead code, or propose refactors without brittle chunking.

Multi‑agent workflows get simpler: a planner, critic, and executor can all share the same long‑lived scratchpad, so you don’t keep throwing away state every few thousand tokens.

You can build ‘document brains’ that actually see everything: full policy libraries, SOPs, or product manuals go into one window, instead of wrestling with RAG configs and retrieval edge cases.
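Before loading a codebase or document library into one window, it is worth checking whether it fits. The sketch below is a rough budget check, not a real tokenizer: the 4‑characters‑per‑token ratio is a common rule of thumb and an assumption here, and the helper names and the 50,000‑token output reserve are made up for illustration.

```python
from pathlib import Path

CONTEXT_WINDOW = 1_000_000  # tokens, the window size discussed above
CHARS_PER_TOKEN = 4         # rough heuristic (assumption); real tokenizers vary


def estimate_tokens(text: str) -> int:
    """Estimate token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(texts, reserve_for_output=50_000, window=CONTEXT_WINDOW):
    """Return (fits, estimated_total_tokens) for a collection of documents."""
    total = sum(estimate_tokens(t) for t in texts)
    return total <= window - reserve_for_output, total


def read_source_files(root: str, suffixes=(".py", ".md")):
    """Yield the text of every file under root with a matching suffix."""
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            yield path.read_text(encoding="utf-8", errors="ignore")


if __name__ == "__main__":
    fits, total = fits_in_context(read_source_files("."))
    print(f"estimated tokens: {total:,} -> {'fits' if fits else 'too large'}")
```

Under this heuristic, 1M tokens is about 4 million characters of text, so how many files fit depends heavily on how large they are. For anything close to the limit, count with the model’s actual tokenizer.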

Those are the workflows Nemotron is aiming at, and they line up cleanly with Nvidia’s broader ‘agentic AI stack’ story.

The Nvidia narrative:

Nemotron 3 is framed as the model layer of Nvidia’s “agentic AI stack” (GPUs → CUDA → NeMo/NIM → Nemotron).

The 1M‑token angle is about making multi‑agent and long‑horizon workflows economically viable: entire codebases, full document stores, or long‑running plans in a single context window.

The $26B, five‑year plan is about turning “open‑weight Nvidia models” into the default choice across clouds, on‑prem vendors (Dell, HPE), and AI‑native companies like Perplexity.

If CUDA was how Nvidia captured the AI compute stack, Nemotron is its attempt to do the same at the model layer, except this time the weights are open and already running behind your favorite apps. In other words, the next default enterprise model may not be the one with the loudest launch, but the open‑weight one that quietly ships everywhere first.