No clear sign of mass job loss so far, says Anthropic.

Anthropic’s new labour market study finds AI is reshaping white‑collar work long before it shows up as mass unemployment.

If you hang around AI Twitter or LinkedIn, you’d think we’re either weeks away from AI wiping out white‑collar work, or on the verge of a productivity utopia. Anthropic’s new paper, “Labor market impacts of AI: A new measure and early evidence,” cuts through that noise with a simple message: so far, there is no clear sign of mass job loss from AI in the data.

That doesn’t mean “NO IMPACT.”

It means the way AI is reshaping work is slower, more targeted, and more subtle than the loudest headlines suggest.

From “AI could do this” to “AI is doing this”

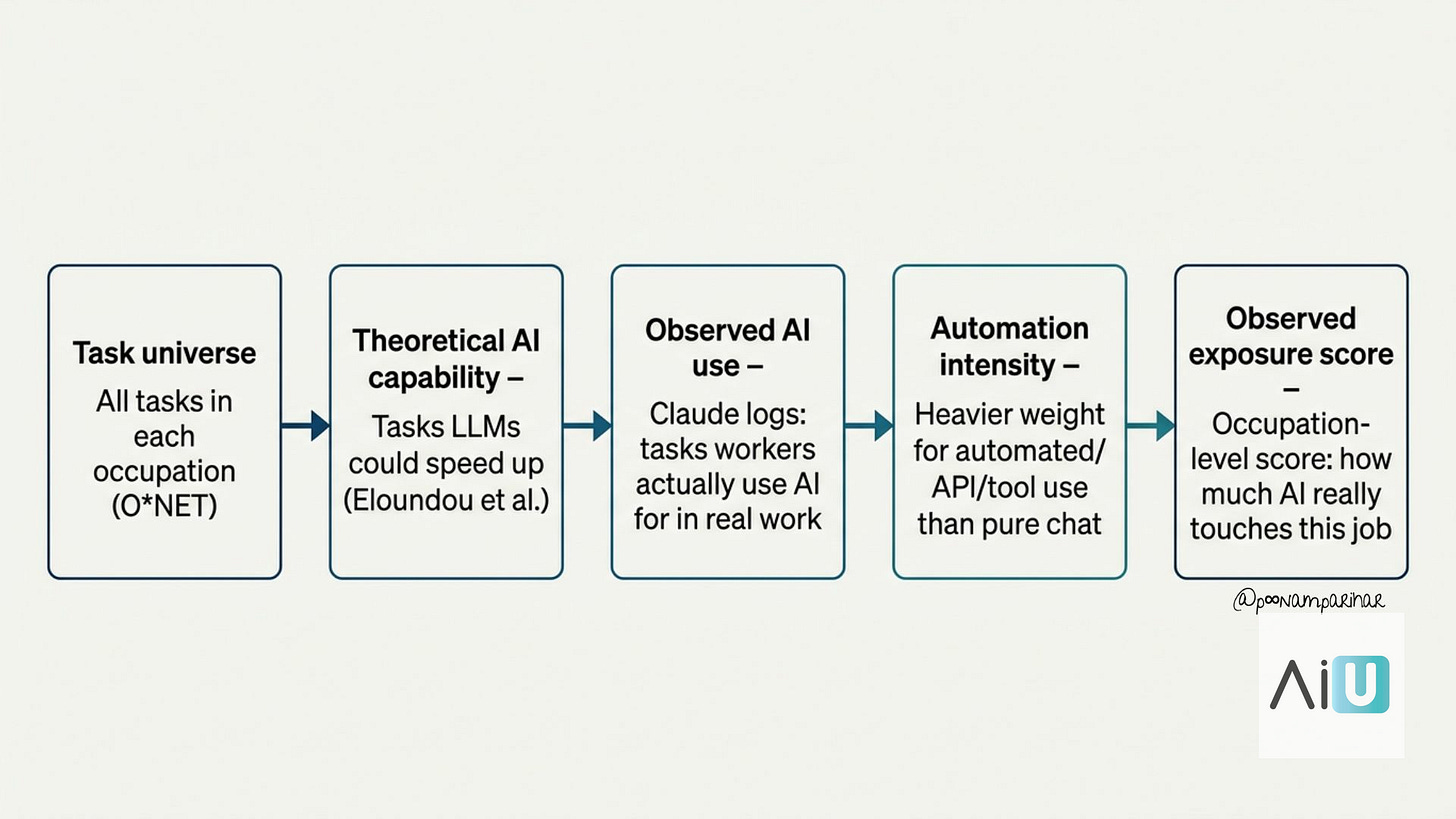

Most earlier studies ask: “Which tasks could AI help with?”

They look at detailed task lists for hundreds of jobs (from databases like O*NET), then rate how much large language models could speed them up. That gives you a theoretical exposure score.

Anthropic keep that idea but add something important: they use real usage data from Claude to see which tasks people are actually using AI for in their day jobs.

They combine three ingredients:

Task lists for ~800 US occupations (O*NET)

An earlier measure of which tasks are technically feasible for LLMs

Millions of Claude conversations and API calls tagged as work‑related

From this, they build “observed exposure”, which basically asks: out of all the tasks AI could help with, where is AI already being used in professional settings, especially in automated or semi‑automated ways?
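To make the idea concrete, here is a toy Python sketch of how an occupation‑level observed‑exposure score could be computed from a task list. It is not Anthropic’s actual formula: the `Task` fields, the hours, the example role and the `USAGE_WEIGHT` values (full credit for automated use, half for augmentative use) are all our own hypothetical assumptions. It just shows why observed exposure sits below theoretical exposure: a task can be feasible on paper yet absent from real usage.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical weights (our assumption, not Anthropic's): automated use
# counts in full, augmentative use at half, no observed use at zero.
USAGE_WEIGHT = {"automated": 1.0, "augmented": 0.5, None: 0.0}


@dataclass
class Task:
    name: str
    hours: float           # time spent on the task per week (hypothetical)
    feasible: bool         # could an LLM speed it up, on paper?
    usage: Optional[str]   # how it shows up in work-related AI usage, if at all


def theoretical_exposure(tasks: list[Task]) -> float:
    """Share of working time spent on tasks that are feasible for an LLM."""
    total = sum(t.hours for t in tasks)
    return sum(t.hours for t in tasks if t.feasible) / total


def observed_exposure(tasks: list[Task]) -> float:
    """Share of working time on feasible tasks that AI is actually used for,
    weighted by how automated that use is."""
    total = sum(t.hours for t in tasks)
    covered = sum(t.hours * USAGE_WEIGHT[t.usage] for t in tasks if t.feasible)
    return covered / total


# A made-up analyst role, for illustration only.
tasks = [
    Task("write SQL queries", 12, True, "automated"),
    Task("draft summary reports", 12, True, "augmented"),
    Task("present findings to stakeholders", 8, True, None),
    Task("negotiate vendor contracts", 8, False, None),
]

print(f"theoretical: {theoretical_exposure(tasks):.0%}")  # theoretical: 80%
print(f"observed:    {observed_exposure(tasks):.0%}")     # observed:    45%
```

The gap between the two numbers is the “far from its theoretical ceiling” effect in miniature: presenting findings counts as feasible but contributes nothing until people actually use AI for it.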

The result?

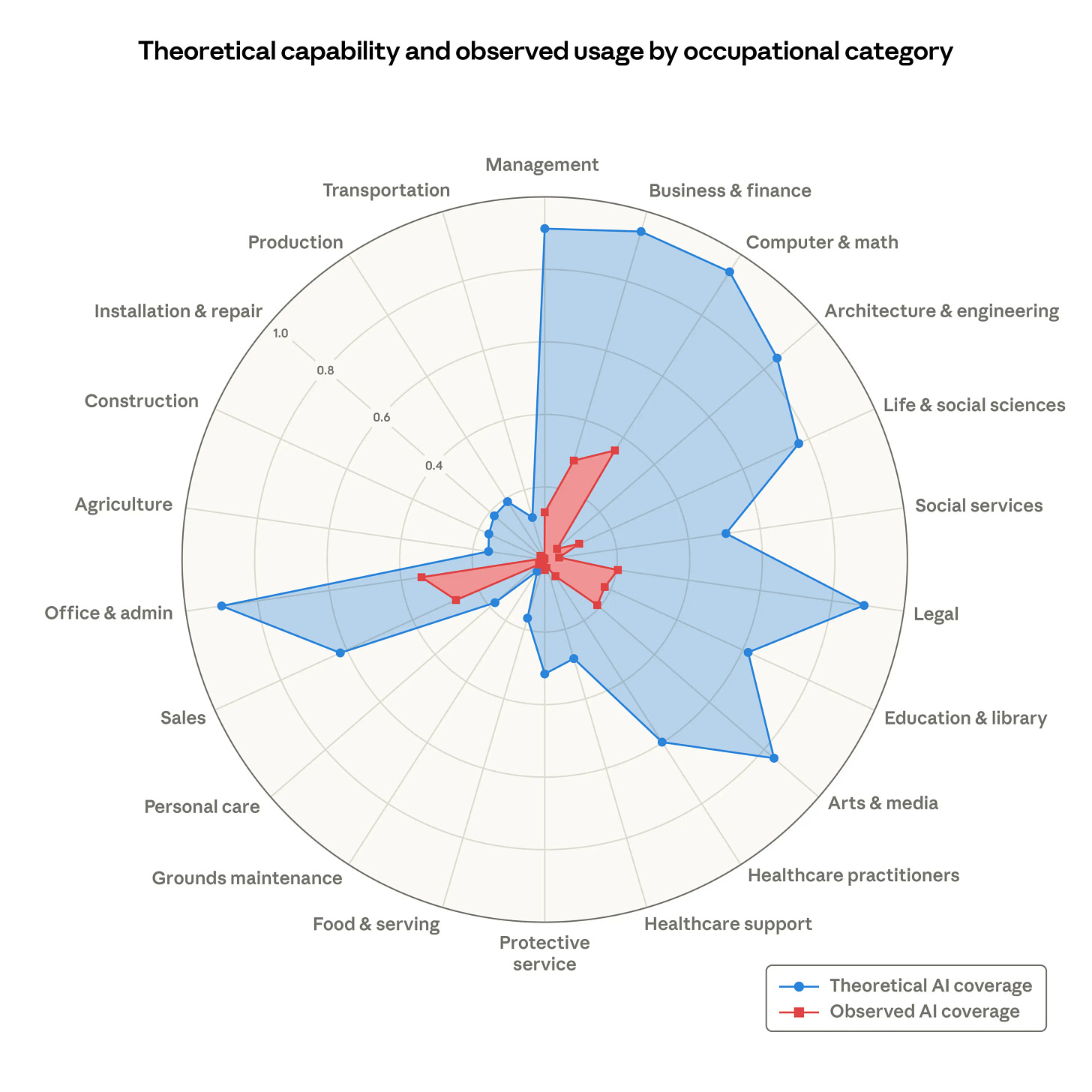

AI is far from its theoretical ceiling. Even in tech‑heavy jobs like software and data roles, AI is currently touching only part of the work. Claude, for example, is used on about a third of tasks in Computer & Math occupations, even though far more tasks look technically doable on paper.

Who is most exposed right now?

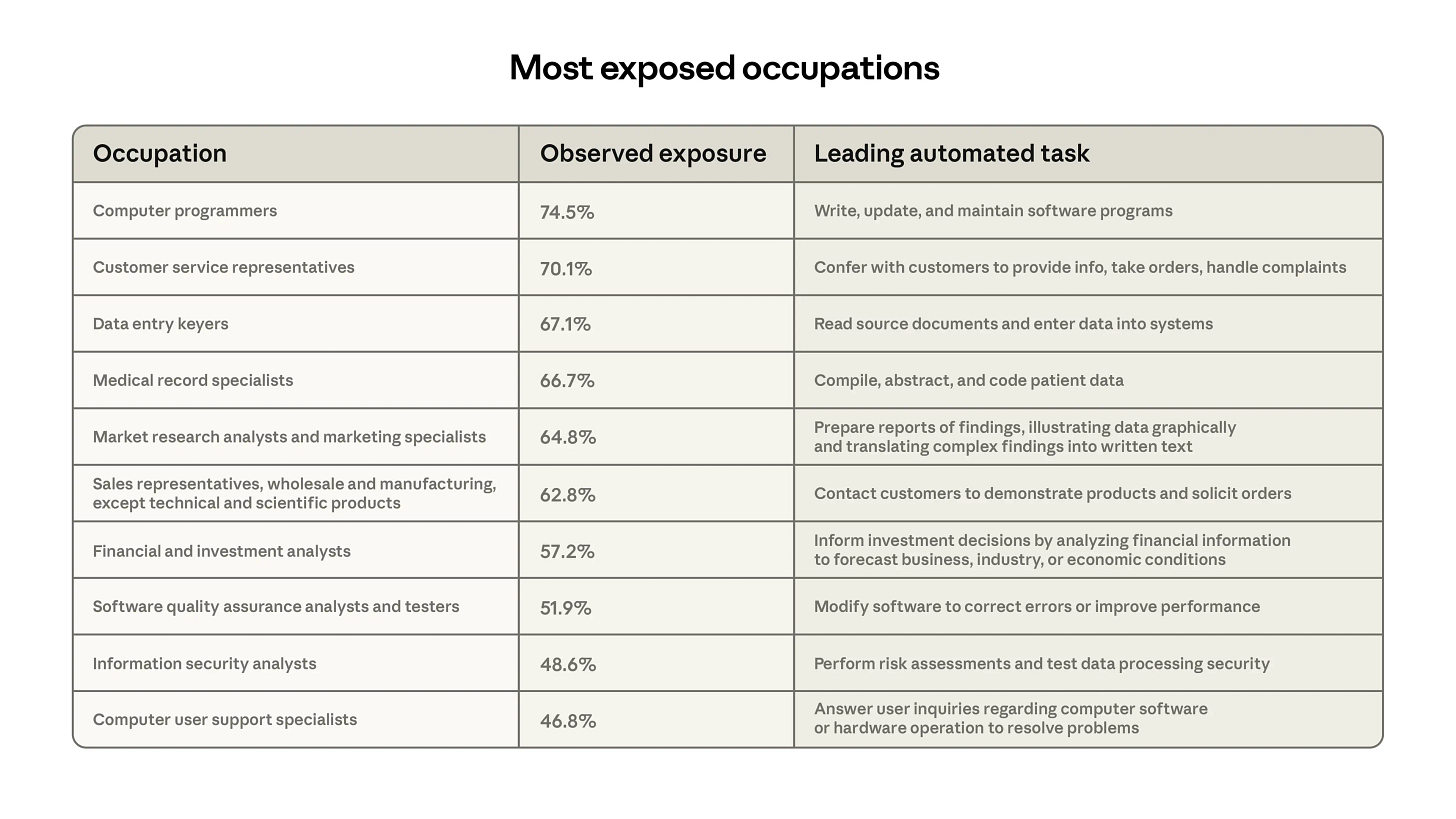

When Anthropic roll this up to full jobs, the pattern is clear.

High observed exposure: computer programmers, customer service reps and data entry workers are at the top. Programmers show around 75% coverage of their tasks; data entry workers sit around 67%.

Low or zero exposure: roughly 30% of workers are in jobs where AI barely shows up in the data. Think cooks, lifeguards, bartenders, mechanics, dishwashers, dressing room attendants.

The demographics of the “high exposure” group may surprise you. These workers are more likely to be:

Older

Female

White or Asian

Better educated

Higher‑paid

People with graduate degrees are almost four times as common in the highly exposed group as in the unexposed group.

So the first wave of AI impact is not focused on low‑wage workers.

It’s landing on office workers, coders, analysts, and other professionals.

Anthropic also compare their exposure measure to official US forecasts for job growth out to 2034. Jobs with higher observed exposure are projected to grow a bit more slowly.

A 10‑point increase in observed exposure is linked with about a 0.6‑point drop in projected growth, while a purely theoretical exposure metric shows no such link. That suggests the “observed” measure is picking up something real about where pressure is building.
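Read as a straight line, that finding implies a slope of about -0.06 points of projected growth per point of exposure. Here is a back‑of‑envelope Python sketch, assuming the relationship is roughly linear across the whole range (our simplification; the article doesn’t claim this) and using hypothetical exposure values rather than any figures from the paper:

```python
# Slope implied by the article: +10 points of observed exposure goes
# with roughly -0.6 points of projected growth.
SLOPE = -0.6 / 10  # growth points per exposure point


def projected_growth_gap(exposure_a: float, exposure_b: float) -> float:
    """Linear estimate of how much slower job A is projected to grow than job B.

    Exposures are on a 0-100 scale; a negative result means job A is
    projected to grow more slowly. Assumes the relationship is linear.
    """
    return SLOPE * (exposure_a - exposure_b)


# Hypothetical jobs at 60 and 20 points of observed exposure.
print(f"{projected_growth_gap(60, 20):.1f} points")  # -2.4 points
```

This is a correlation in official projections, not a causal estimate, so it says where forecasters expect slower growth, not why.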

So, are people losing jobs to AI?

This is the core question. Anthropic tackle it directly by looking at unemployment.

They split workers into two groups:

People in the most AI‑exposed occupations

People in occupations with effectively zero AI exposure

Then they track unemployment rates for both groups in US survey data, before and after late 2022 (the start of the ChatGPT era).

Findings?

No clear sign of mass job loss so far. Unemployment rates for highly exposed workers have not climbed sharply compared with the unexposed group. Any differences are small and not statistically significant.

This result holds across different exposure cut‑offs and even when they use other data sources, like unemployment insurance claims.

They also ask whether this framework would pick up a big shock if one happened. The answer appears to be yes: a “Great Recession for white‑collar workers”, in which unemployment in exposed jobs doubles from around 3% to 6%, would show up clearly in their approach. It simply isn’t there.
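The logic of this comparison resembles a difference‑in‑differences design: instead of comparing raw unemployment levels, you compare how much each group’s rate changed across late 2022. A minimal Python sketch with made‑up rates (not the paper’s data), assuming simple before/after averages; the function name `diff_in_diff` and all numbers are illustrative:

```python
from statistics import mean


def diff_in_diff(exposed_pre, exposed_post, control_pre, control_post):
    """Change in the exposed group's average unemployment rate minus the
    change in the unexposed (control) group's rate, in percentage points."""
    exposed_change = mean(exposed_post) - mean(exposed_pre)
    control_change = mean(control_post) - mean(control_pre)
    return exposed_change - control_change


# Hypothetical unemployment rates (%) for a few periods before late 2022.
exposed_pre = [2.75, 3.0, 3.25]
control_pre = [3.75, 4.0, 4.25]

# Scenario 1: both groups drift up by the same 0.25 points, so no AI-specific gap.
no_shock = diff_in_diff(exposed_pre, [3.0, 3.25, 3.5], control_pre, [4.0, 4.25, 4.5])

# Scenario 2: a "Great Recession for white-collar workers", where exposed
# unemployment doubles from about 3% to 6% while the control group stays flat.
shock = diff_in_diff(exposed_pre, [5.75, 6.0, 6.25], control_pre, control_pre)

print(f"no shock: {no_shock:.2f} points")  # no shock: 0.00 points
print(f"shock:    {shock:.2f} points")     # shock:    3.00 points
```

A real study would also estimate standard errors to judge whether a small gap is distinguishable from zero; this sketch only computes the point estimate. Even so, it shows why a 3‑point jump would be hard to miss.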

The one wrinkle is young workers. Earlier research suggested that people aged 22–25 might be finding it harder to get into AI‑exposed roles. Anthropic find something similar:

Unemployment for young workers in exposed jobs is mostly flat.

But when they track job moves over time, the share of young workers starting new jobs in highly exposed roles appears to have fallen in 2024. They estimate roughly a 14% drop in “job‑finding” into these roles compared with 2022, and the drop doesn’t show up for workers over 25.

The authors stress that this estimate is only weakly significant and that measurement is tricky. But it fits a common‑sense story: companies don’t instantly fire lots of knowledge workers; they quietly hire fewer juniors into roles where AI is boosting productivity.
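Read as a relative change (the natural reading of “a 14% drop”), that figure is not a fall of 14 percentage points. A quick Python sketch with hypothetical job‑finding rates, invented purely for illustration and not taken from the paper, shows the difference:

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after` (-0.14 means a 14% fall)."""
    return (after - before) / before


# Hypothetical job-finding rates (%) into highly exposed roles for ages 22-25.
rate_2022 = 5.0
rate_2024 = 4.3

point_change = rate_2024 - rate_2022
print(f"{point_change:.1f} percentage points")                   # -0.7 percentage points
print(f"{relative_change(rate_2022, rate_2024):.0%} relative")  # -14% relative
```

A drop of under one percentage point in a small rate can still be a double‑digit relative decline, which is why a shift like this can be real yet easy to miss in headline statistics.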

[ In this newsletter you get sharp, unfiltered short essays; for full‑length, deep‑dive analysis on AI, subscribe to our companion publication, Intelligent Founder AI. ]

What this means for the AIUnfiltered crowd, and how to PERSONALIZE Anthropic’s framework

Anthropic’s framework is a measurement tool, but we can treat it like a radar for our own jobs and companies. Instead of asking “Will AI take my job?”, a better question is: “How much of my work already looks like the tasks Anthropic see AI touching today?”

Now, if you read AIUnfiltered, you’re almost certainly in the high‑exposure zone:

building software,

running operations,

writing content,

handling customers,

doing analysis.

Anthropic’s work suggests three practical takeaways.