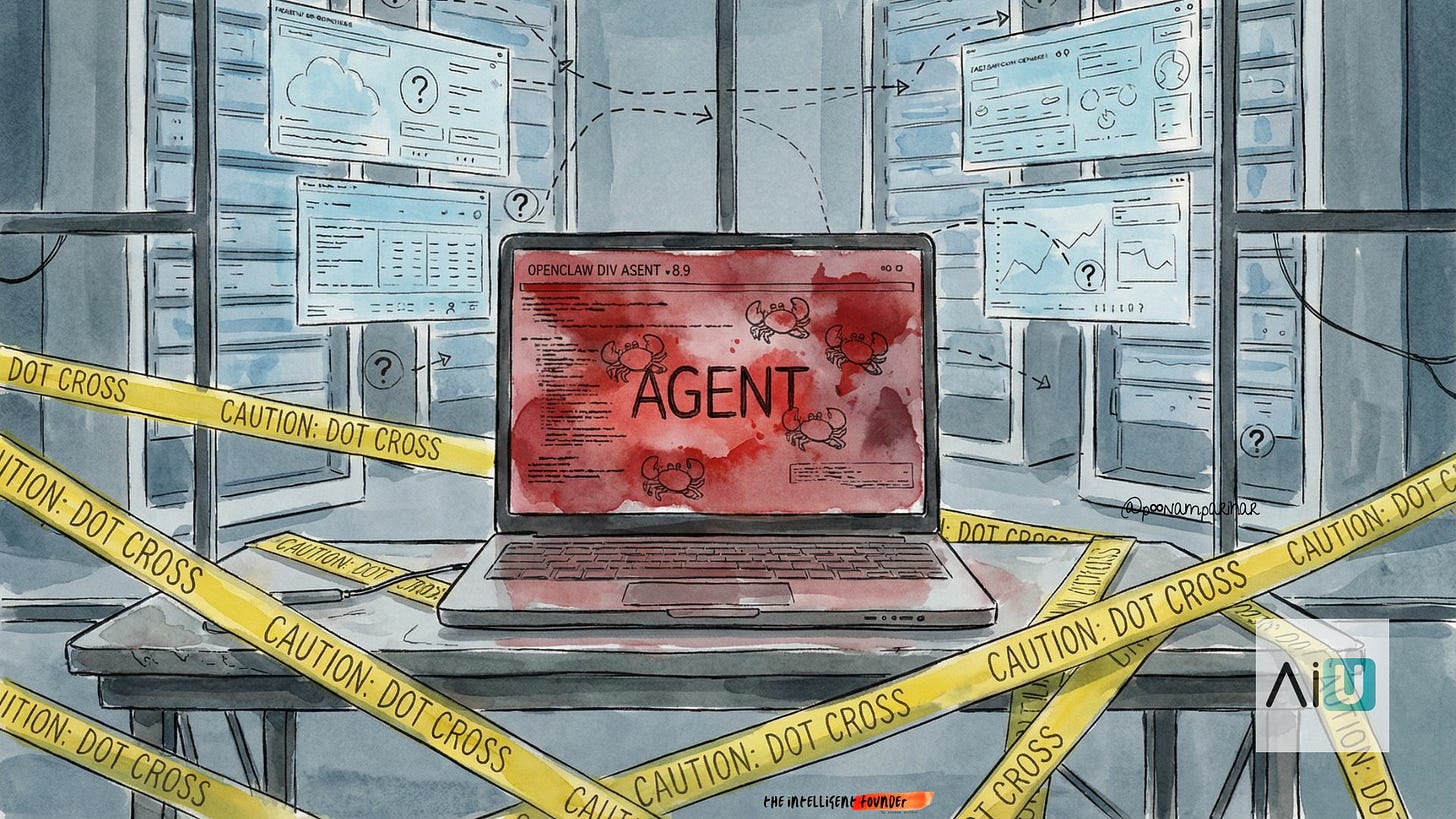

OpenClaw 🦞 Ecosystem Explosion - 🚫 Who's banning it, who's cloning it, and what does it all mean?

Why bans from Meta, Gartner, Anthropic, and Google aren’t killing OpenClaw’s ideas, just forcing the same agent model into safer runtimes and managed platforms.

OpenClaw didn’t just make agents cool; it made them dangerous enough that everyone had to pick a side.

In just a few weeks, “the AI that actually does things” forced enterprises, model vendors, and developers to answer the same question:

Do we block this thing, or do we rebuild it on our own terms?

🚀 Everyone building their own OpenClaws

OpenClaw’s blow‑up is pushing people to deploy alternative frameworks explicitly as safer OpenClaw replacements in corporate settings. The OpenAI acquisition/hire split the community into two camps, but it hasn’t changed the basic idea: an always‑on agent that can click buttons, read files, and run workflows is too useful to abandon. So people are rebuilding it with more walls and more supervision instead of giving up on it.

New runtimes like NanoClaw and Cloudflare’s Moltworker copy the “agent that actually does things” pattern but run in containers or Workers instead of with raw root on a laptop.

Enterprise platforms now pitch “OpenClaw for business”: hosted agent control planes with RBAC, audit logs, and compliance that let CIOs adopt agents without blessing the original repo.

Classic agent frameworks (SuperAGI, LangChain/AutoGen stacks, etc.) are being repositioned as build‑your‑own OpenClaw, giving teams the architecture without the brand or baggage.
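To make the "RBAC plus audit logs" pitch concrete, here is a minimal sketch of what such a control plane checks before an agent touches a tool. Everything in it is an assumption for illustration: the role names, the tool names, and the `authorize` helper are hypothetical and do not come from OpenClaw or any vendor's product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical mapping of roles to the tools they may invoke.
ROLE_PERMISSIONS = {
    "viewer": {"read_file"},
    "operator": {"read_file", "run_workflow"},
}


@dataclass
class AuditLog:
    """In-memory audit trail; a real platform would persist this."""
    entries: list = field(default_factory=list)

    def record(self, user: str, role: str, tool: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "tool": tool,
            "allowed": allowed,
        })


def authorize(user: str, role: str, tool: str, log: AuditLog) -> bool:
    """Return True if the role may call the tool; log every decision."""
    allowed = tool in ROLE_PERMISSIONS.get(role, set())
    log.record(user, role, tool, allowed)
    return allowed
```

The point of the design is that denied calls are logged too, so security teams can see what an agent tried to do, not only what it did.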

Where is this going?

The OpenClaw model (goal, plan, tools, act) is becoming a commodity pattern; the real game is who offers it with enough safety and governance that a risk‑averse company will say yes.
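The goal, plan, tools, act pattern fits in a few lines, which is part of why it commoditizes so quickly. The sketch below is not OpenClaw's code: the tools, the stand-in `plan` function, and the allowlist are all hypothetical, and a real agent would ask a model to produce the plan.

```python
from typing import Callable


# Hypothetical tools; a real agent would wrap file, browser, or shell access.
def read_file(arg: str) -> str:
    return f"contents of {arg}"


def send_report(arg: str) -> str:
    return f"report sent: {arg}"


TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": read_file,
    "send_report": send_report,
}


def plan(goal: str) -> list[tuple[str, str]]:
    """Stand-in planner returning fixed (tool, argument) steps."""
    return [("read_file", "sales.csv"), ("send_report", goal)]


def run_agent(goal: str, allowed: set[str]) -> list[str]:
    """Act on each planned step, blocking tools outside the allowlist."""
    results = []
    for tool_name, arg in plan(goal):
        if tool_name not in allowed or tool_name not in TOOLS:
            results.append(f"blocked: {tool_name}")
            continue
        results.append(TOOLS[tool_name](arg))
    return results
```

Calling `run_agent("weekly summary", {"read_file"})` runs the read step and blocks the send step. The loop itself is trivial; the allowlist check is where the safety and governance differences between implementations would live.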

🚫 Who’s Banned / Restricted OpenClaw and what that triggers

For non‑experts, the simplest way to read the last month is:

Bosses are slamming the door on raw OpenClaw, while engineers quietly sneak in safer copies and hosted versions that don’t scare security as much.

Meta, Kakao, Naver, Karrot and others have told staff to keep OpenClaw off work devices and corporate networks, treating it as shadow IT with too much privilege.

Gartner labels OpenClaw an “unacceptable cybersecurity risk” and advises CISOs to block it until they have proper governance and isolation in place.

Anthropic bans use of Claude subscription OAuth tokens in third‑party harnesses like OpenClaw, and Google’s Antigravity team has suspended some Gemini subscribers for routing flat‑rate plans through agent loops.

What does this mean?

Short‑term effect: raw OpenClaw becomes politically toxic in big companies and economically constrained by model vendors, pushing serious deployments toward API‑metered, governed stacks.

Medium‑term effect: “agents that touch real systems” don’t go away. Bans simply create demand for hardened clones and managed platforms, and turn secure agent infrastructure into the next contested layer in the AI stack.

The story from here isn’t whether OpenClaw survives bans or foundation politics; it’s that its architecture already won.

Work is drifting toward agents that plan and act over real systems. The only real variable left is whose implementation gets trusted enough to run everywhere, and who gets paid to keep it from burning the house down.