Sam Altman’s Pentagon Love Letter Might Age Worse Than He Thinks

Anthropic takes the hit now, OpenAI takes the leash later.

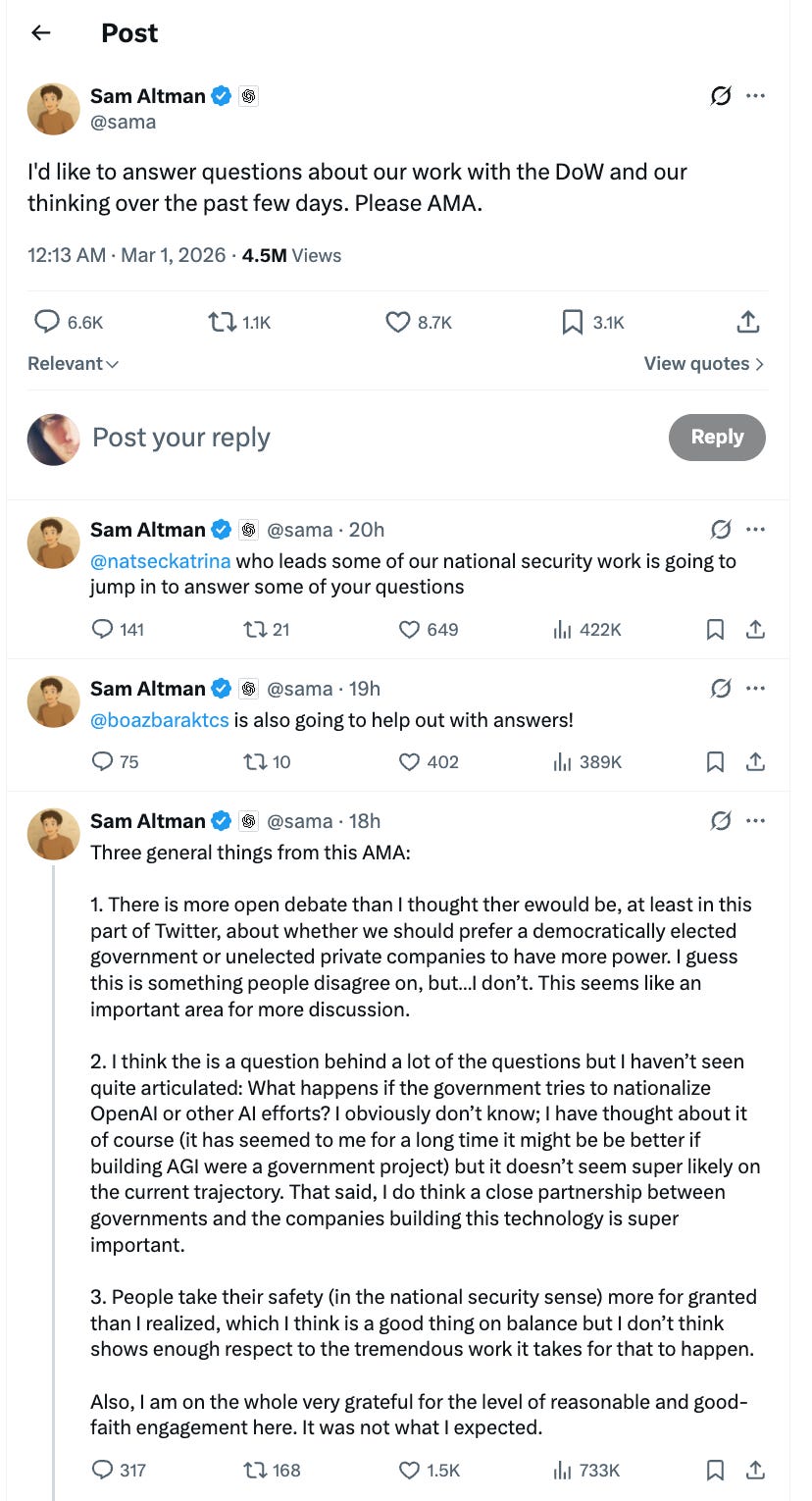

1. Sam Shows Up on Twitter To Prove He’s a Home Boy

Sam Altman popped onto X for an impromptu AMA about OpenAI’s new deal with the U.S. Department of War, and the core message was simple:

we love America,

we trust U.S. institutions, and

we’ll support “all lawful” military uses of our tech.

He stressed that OpenAI shares “red lines” similar to Anthropic’s, BUT where Anthropic walked away, he believes contracts, laws, and the ability to shut systems down are enough to keep OpenAI safe while it works with the Pentagon.

Link to the AMA tweet: https://x.com/sama/status/2027900042720498089

2. The AMA Doesn’t Change Anyone’s Mind

The replies under that AMA were dominated by skepticism and anger, and his answers did little to change minds.

Critics pushed him on civilian casualties, “murder by algorithm,” regulatory capture, and whether “lawful” is just a fig leaf when the government itself sets the law. A few VCs and nat‑sec types asked friendly, technical questions, but the overall vibe was closer to damage control in a hostile room than a persuasive conversation that shifted positions.

3. Anthropic Gets Frozen at Home, Boosted Abroad

While Sam was explaining why OpenAI stayed in the tent, Anthropic was being frozen out of the U.S. government for refusing to drop two guardrails:

no domestic mass surveillance and

no fully autonomous lethal weapons.

Trump has ordered all federal agencies to stop using Anthropic, and the Pentagon labeled the company a “supply chain risk,” effectively blacklisting it across the defense ecosystem. In the short term, that looks brutal for Anthropic. BUT seen from outside America, it reads as:

“we walked away from killer robots and mass surveillance, even when it cost us.”

4. Did the Pentagon Just Lock Out the Better Model?

If Claude continues to be seen as stronger on reasoning and alignment, the Pentagon has just voluntarily cut itself off from one of the most capable and safety‑focused systems on the frontier stack.

Anthropic can now position itself as the premium, high‑integrity option for non‑U.S. governments, corporates, and NGOs that don’t want to be hard‑wired into U.S. military supply chains. Meanwhile, the U.S. security apparatus has signaled to future labs: push back on our terms and we will nuke your access. That is exactly the opposite incentive you’d want if you really cared about independent safety judgment.

5. OpenAI Wins a Deal, and a Cage

OpenAI gets the short‑term prize:

government contracts,

privileged access, and

the status of “trusted patriotic vendor.”

The price is that Sam has publicly tied OpenAI’s red lines to whatever U.S. law and policy say is acceptable, and promised not to turn systems off over mere moral disagreement with “legal military decisions.”

From a more sympathetic viewpoint, you can argue this keeps OpenAI “inside the system,” where it can at least add safety input, but it also means the ultimate boundary of “too far” is set in Washington, not in the lab. That’s leverage for the state and a subtle cage for the company, whether OpenAI recognizes it yet or not.

In this newsletter you get sharp, unfiltered short essays; for full‑length, deep‑dive analysis on AI, subscribe to our companion publication, Intelligent Founder AI.
