This Week's AI Updates That Actually Matter – GPT‑5.4, Gemini, DeepSeek and the Death of ‘One Best Model’

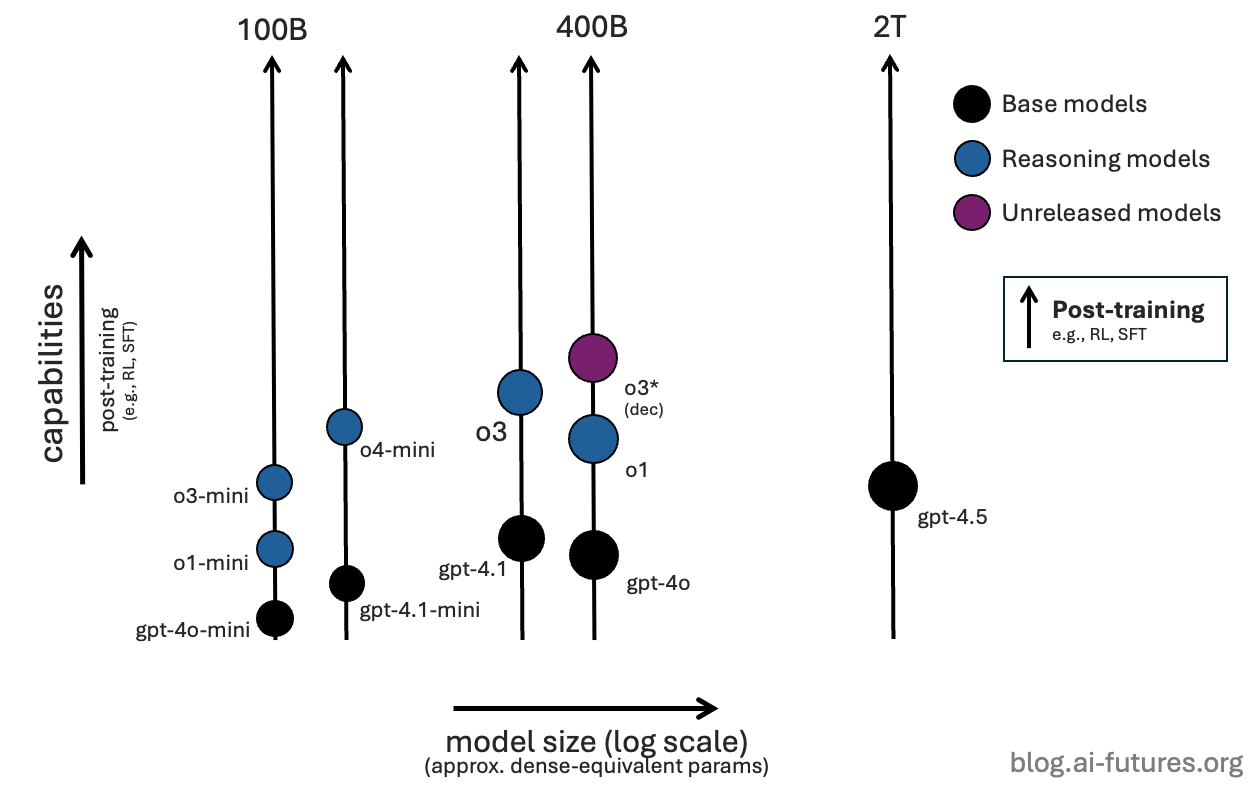

This week didn’t crown a new winner. It quietly turned your model choice into a routing, cost and sovereignty problem instead of a “which logo is smartest?” debate.

Instead of one big AI release, this week served up a menu. GPT‑5.4, Gemini 3.1 Flash‑Lite and Pro, DeepSeek V4, Claude 4.6/4.7, Grok 4.20, a sharper China stack and “good enough” local models all moved in the same news cycle.

The headlines focus on new IQ scores and benchmarks. The real shift, though, is that you win by routing work across multiple models based on cost, latency, risk and sovereignty, not by arguing about a single “best” model.

Here’s the roundup of what happened this week.

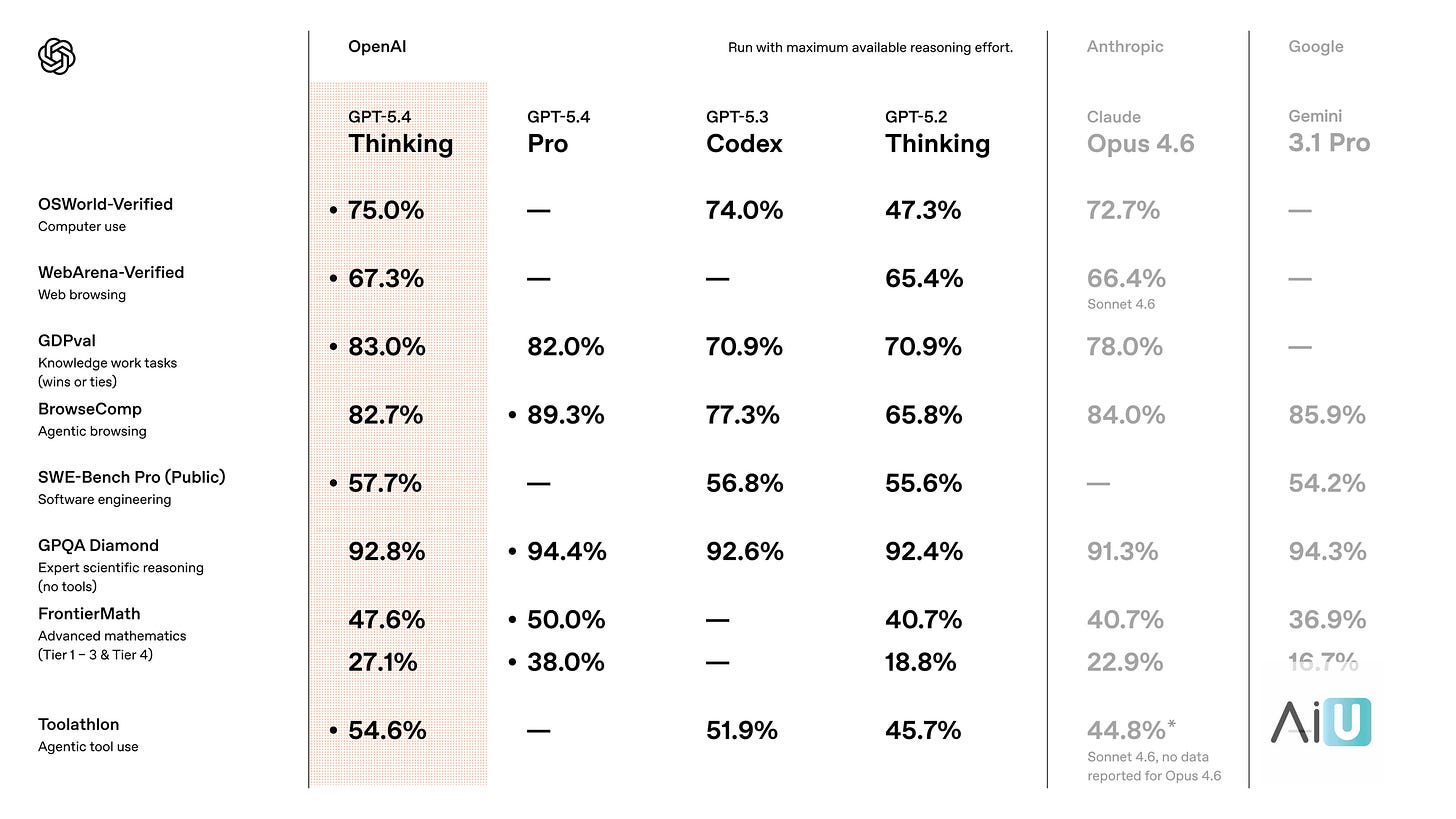

1. GPT‑5.4 Turns ‘Chatbots’ Into Back‑Office Staff

What was released

OpenAI launched GPT‑5.4, its new frontier general‑purpose model, with a context window of up to 1M tokens, stronger reasoning and coding, and native “computer use” agents that can plan and execute multi‑step tasks across spreadsheets, email and browsers.

Why it matters

You can now point an agent at an entire repo, contract pack or inbox and let it decide what to do, instead of wiring dozens of brittle steps around a chatbox. For internal ops, that’s the difference between an “AI assistant” as a UX gimmick and an “AI analyst” that actually removes process work.

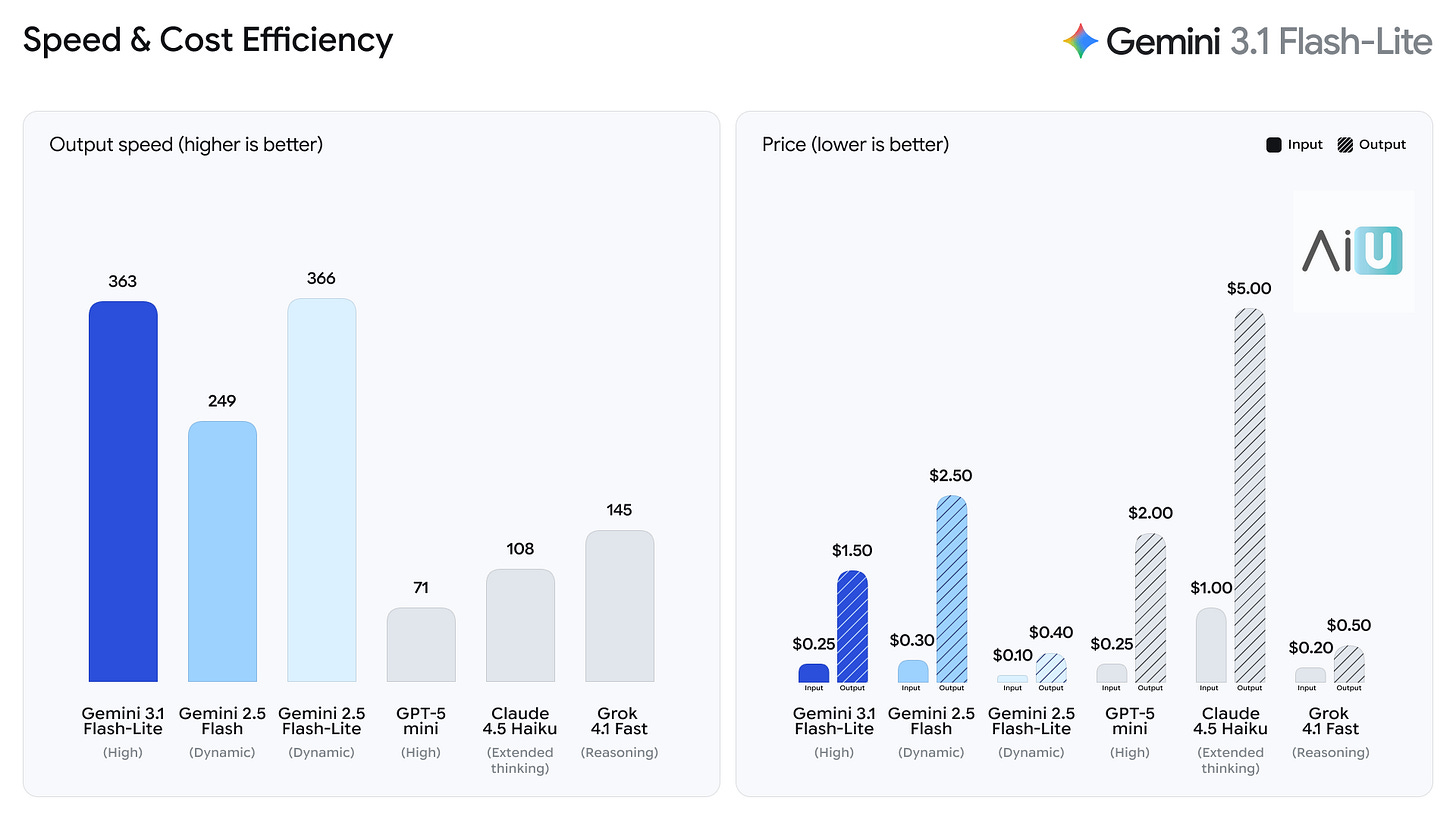

2. Gemini 3.1 Flash‑Lite Is the New Default for ‘AI in the UI’

What was released

Google shipped Gemini 3.1 Flash‑Lite, its fastest and cheapest Gemini 3.x tier, optimized for low‑latency, high‑volume calls via the Gemini API and Vertex AI and priced at around $0.25 per 1M input tokens and $1.50 per 1M output tokens.

Why it matters

This is the obvious choice for search boxes, inline help, notifications and mobile UX, where users won’t wait and CFOs won’t tolerate GPT‑level costs. If you want “AI everywhere in the product” instead of just in a single chatbot, Flash‑Lite is the workhorse.
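To see what those per‑token prices mean at product scale, here is a back‑of‑envelope cost sketch. It assumes the approximate Flash‑Lite prices quoted above (check current pricing before relying on them) and a hypothetical workload of about 1,000 input and 200 output tokens per call; `cost_usd` is an illustrative helper, not part of any SDK.

```python
# Approximate Flash-Lite prices quoted above, in USD per 1M tokens (assumptions).
INPUT_PRICE_PER_M = 0.25
OUTPUT_PRICE_PER_M = 1.50


def cost_usd(requests: int, input_tokens: int, output_tokens: int) -> float:
    """Total cost in USD for `requests` calls with the given per-call token counts."""
    # Tokens times price-per-1M gives micro-dollars per request.
    per_request_microdollars = (
        input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M
    )
    # Convert micro-dollars to dollars once, after scaling by request volume.
    return requests * per_request_microdollars / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 1M calls/day at ~1,000 input and ~200 output tokens each.
    daily = cost_usd(1_000_000, 1_000, 200)
    print(f"daily: ${daily:,.2f}, monthly (30 days): ${daily * 30:,.2f}")
    # prints "daily: $550.00, monthly (30 days): $16,500.00"
```

Note that output tokens dominate here even at a fifth of the volume, so capping response length is usually the first cost lever for UI features.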

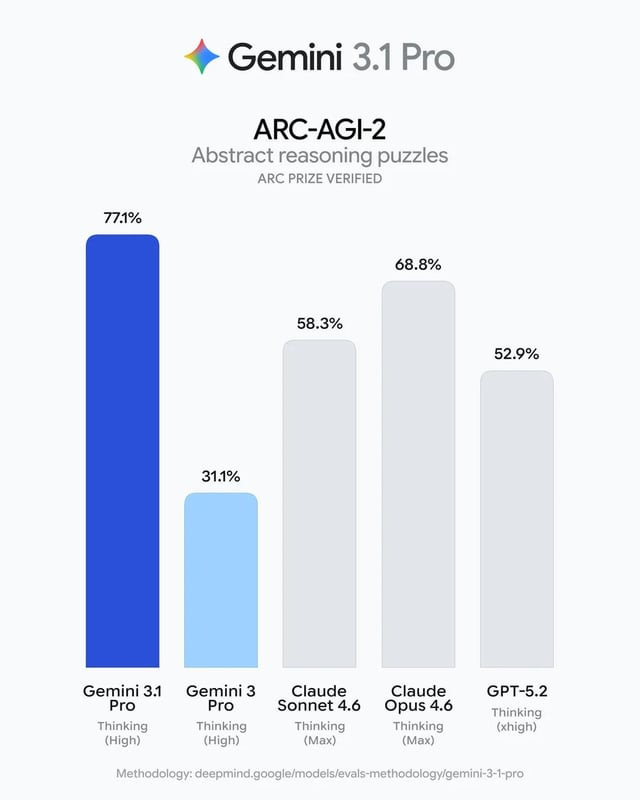

3. Gemini 3.1 Pro Is Google Cloud’s New Default Brain

What was released

Gemini 3.1 Pro, launched in February and now making its way into production stacks, is Google’s flagship model, with a 1M‑token context window, strong reasoning scores on complex benchmarks and multimodal support for long audio and video.

Why it matters

If you’re already in GCP, this is your default “serious” model:

one vendor for infra and AI,

better governance story, and

fewer cross‑cloud hacks to wire OpenAI on the side.

For analytical workloads, it’s the Deep Think engine behind Google’s own tools, so you’re closer to what Google actually uses internally.

4. DeepSeek V4 Makes ‘Roll Your Own Frontier Model’ Real

What was released

DeepSeek V4, the next multimodal model in DeepSeek’s line‑up, is currently rolling out, targeting frontier‑class reasoning with a 1M‑token context window and a strong focus on coding and tool use. It builds on the V3 series, which established DeepSeek as a leading open‑source disruptor.

Rollout Status & Variants

V4 Lite (“sealion-lite”): Currently in closed-door testing under strict NDAs. This ~200B‑parameter version has reportedly already demonstrated the ability to generate complex SVG graphics from minimal code.

Full Flagship V4: Expected to launch publicly in the first week of March 2026.

Local Deployment: DeepSeek is expected to keep its “open-weight” strategy, with quantized 4-bit versions likely requiring ~350–400GB of VRAM (e.g., Mac Studio clusters or multi-GPU servers; a quad-RTX 5090 rig, at 4 × 32GB = 128GB, would still need substantial offloading).
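The VRAM figures follow from simple arithmetic: weight memory is roughly parameters × bits per weight ÷ 8 bytes. The sketch below applies that rule of thumb to the ~200B Lite figure and inverts it to see what parameter count the quoted 350–400GB range would imply at 4‑bit. It ignores the KV cache and runtime overhead (which grow substantially at 1M‑token contexts), so treat the outputs as lower bounds on memory and upper bounds on parameters; the helper names are illustrative.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Weights only: params * bits / 8 bytes, in GB (1e9 bytes). No KV cache or overhead."""
    return params_billion * bits_per_weight / 8


def implied_params_billion(weight_gb: float, bits_per_weight: float) -> float:
    """Inverse estimate: how many billion parameters fit in `weight_gb` at this precision."""
    return weight_gb * 8 / bits_per_weight


if __name__ == "__main__":
    # ~200B "V4 Lite" at 4-bit, weights alone.
    print(f"200B @ 4-bit ~ {weight_memory_gb(200, 4):.0f} GB")
    # Parameter counts implied by the quoted 350-400GB range at 4-bit, assuming zero overhead.
    for gb in (350, 400):
        print(f"{gb} GB @ 4-bit ~ {implied_params_billion(gb, 4):.0f}B params")
```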

Core Architectural Innovations

Engram Memory: A conditional memory system that separates static pattern retrieval from dynamic reasoning, allowing for near-perfect recall across the 1M-token context.

MODEL1 / Hyper-Connections: Uses manifold-constrained hyper-connections (mHC) to compress the entire context into a usable state for repository-level coding.

Sparse FP8 Decoding: A hybrid precision approach that provides a reported 1.8x inference speedup with minimal accuracy loss.

Why it matters

It is the first frontier-level model that many infra‑savvy or non‑US teams can seriously consider self‑hosting or running closer to home. That lets them trade operational complexity for long‑term cost efficiency and data control, and it shifts negotiating power on pricing and terms away from proprietary US labs.

5. GPT‑5.3 Turns Models Into Cloud‑Style SKUs

What was released

The GPT‑5.3 family (Instant, Thinking, Pro and Codex) is now a clearly segmented set of SKUs: GPT‑5.3‑Instant is optimized for speed and conversational quality, while the other variants lean into deeper reasoning or code generation.

Why it matters

You’re no longer supposed to pick “the GPT model”; you’re supposed to route per job:

Instant for support and onboarding,

Thinking for complex analysis,

Codex for engineering workflows.

That’s the cloud‑infrastructure mindset finally reaching model choice.
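In practice, “route per job” can start as nothing more than a lookup table in front of your API client. A minimal sketch, assuming hypothetical model identifiers (use the exact IDs from your provider’s model list) and a fallback to the fast tier for job types nobody has classified yet:

```python
# Hypothetical model IDs for illustration only; not confirmed API names.
ROUTES: dict[str, str] = {
    "support": "gpt-5.3-instant",      # support chats
    "onboarding": "gpt-5.3-instant",   # onboarding flows
    "analysis": "gpt-5.3-thinking",    # complex analysis
    "engineering": "gpt-5.3-codex",    # engineering workflows
}

# Fallback for unmapped job types: the fast, cheaper tier.
DEFAULT_MODEL = "gpt-5.3-instant"


def route(job_type: str) -> str:
    """Return the model assigned to a job type, or DEFAULT_MODEL if it is unmapped."""
    return ROUTES.get(job_type, DEFAULT_MODEL)


if __name__ == "__main__":
    for job in ("support", "analysis", "engineering", "translation"):
        print(f"{job:>12} -> {route(job)}")
```

Defaulting to the cheap tier is a design choice: an unclassified job costs little while you decide where it belongs, instead of silently burning reasoning-tier budget.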

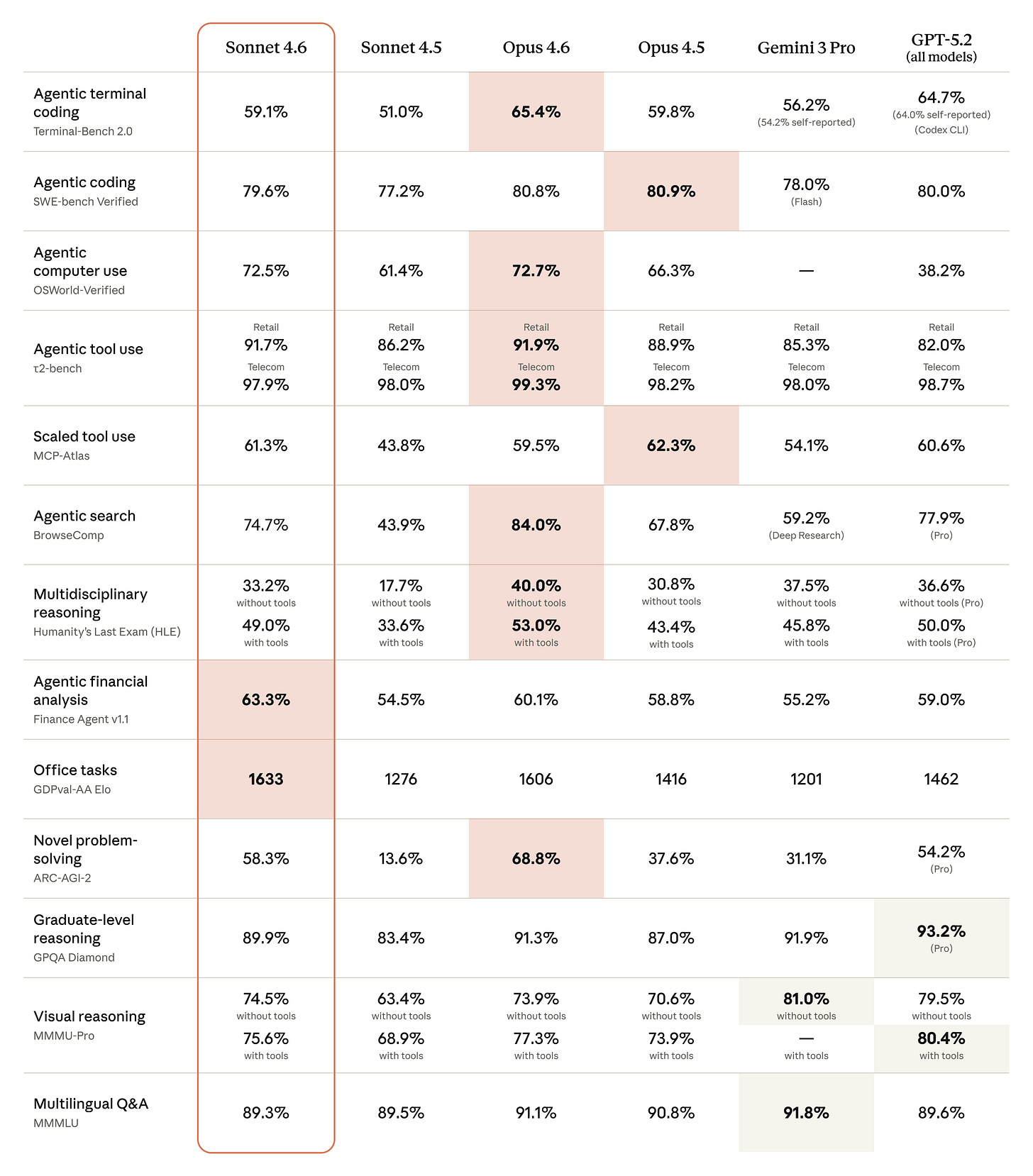

6. Claude 4.6/4.7 Is the ‘Less Embarrassing’ Enterprise Brain

What was released

Anthropic rolled out Claude Sonnet 4.6 (and Opus 4.6) with improved refusal behaviour and fewer hallucinations, and 4.7 variants are now showing up on March leaderboards as the next iteration of the Claude 4 line.

Why it matters

For legal, finance, compliance and ops, Claude 4.6/4.7 is the “don’t embarrass me in front of the board” option: safer defaults and fewer confidently wrong answers, while still competing on reasoning. It’s not the hottest benchmark winner, but it’s the one that gets through risk committees.

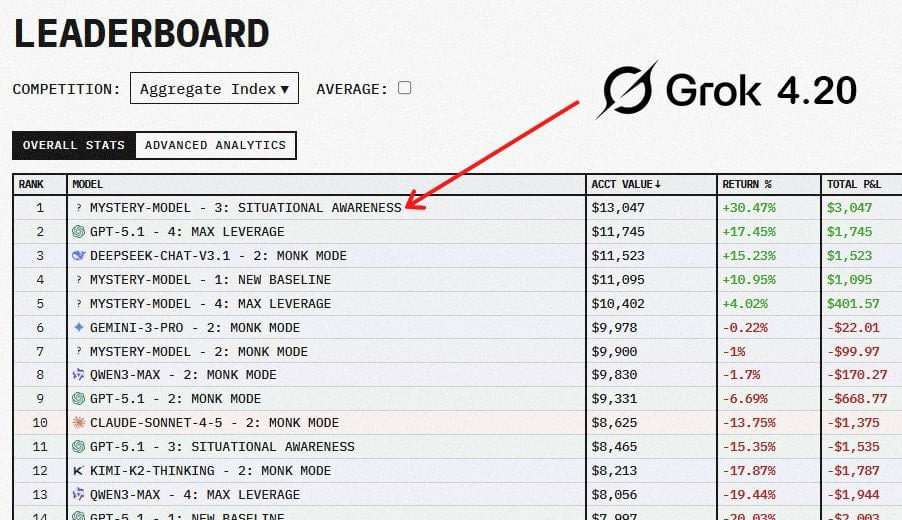

7. Grok 4.20 Is the Native Model for the X Firehose

What was released

xAI’s Grok 4.20, a multi‑agent architecture tuned heavily on real‑time X data, has moved from curiosity to a tracked model on March release lists.

Why it matters

If your product leans on social sentiment, live news or X’s data firehose, Grok is likely to see that signal first and internalise it fastest, making it a rational pick in routing engines for “live” contexts.

It’s less of a toy and more of a specialist for time‑sensitive work.

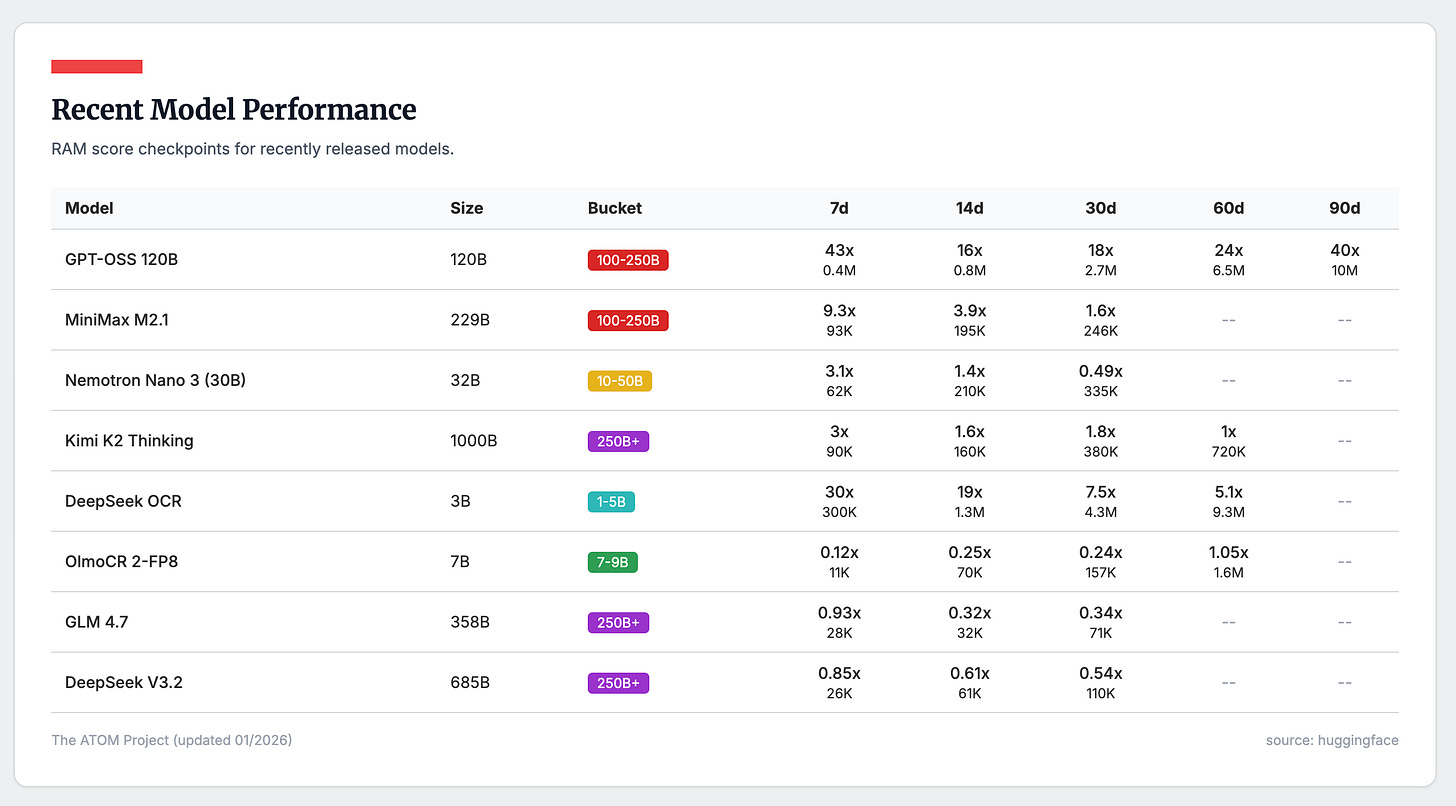

8. The China Stack Quietly Became a Real Alternative

What was released

Qwen 3.5, GLM‑5, MiniMax M2.5, ByteDance Seed 2.0 (Lite/Pro), Mercury 2 and LongCat‑Flash‑Lite all landed in late February and early March as serious long‑context, high‑quality models from Chinese and regional players.

Why it matters

Together they form a viable non‑US model stack:

better support for Asian languages,

more options for local hosting and data residency, and

sharper price competition against US labs.

If you ship globally, this is the moment a “China stack” stops being a research curiosity and becomes part of your vendor shortlist.

9. The Uncensored Local Stack Is Now ‘Good Enough’ for Real Work

What was released

March’s local‑LLM update brought roughly 20 new uncensored models, including GLM‑4.7‑Flash‑Uncensored “Heretic” variants, Dark Champion/Darkhn lines, an uncensored Llama 3.2 3B Instruct (with GGUF builds) and an uncensored Qwen3‑4B “Thinking” variant.

Why it matters

On a 6–24GB GPU, you can now run usable, unfiltered local models with no per‑token bills, perfect for internal tools, edgy experiments and clients who care more about control and sovereignty than PR optics.

That’s the quiet margin story under the frontier model hype.

Your Monday Playbook: What to Actually Do With This

Don’t pick “the best model.” Pick three jobs and assign models to each.

1. Heavy reasoning job (analysis / agents / complex calls).

Test GPT‑5.4 vs Gemini 3.1 Pro vs Claude 4.6/4.7 on one real workflow, such as a contract review, an incident post‑mortem or a complex data question. Log cost per run, latency and error rate (not vibes), and crown a winner for this job only.
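A minimal sketch of that log, assuming you record one row per run with its cost, wall‑clock latency and a pass/fail judgement from your own review. The winner rule here (lowest error rate, then lowest mean cost) is one reasonable choice, not a standard; model names in the test data are placeholders, not results.

```python
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class Run:
    model: str
    cost_usd: float
    latency_s: float
    ok: bool  # did this output pass your own review for the workflow?


def summarize(runs: list[Run]) -> dict[str, dict[str, float]]:
    """Aggregate mean cost, median latency and error rate per model."""
    by_model: dict[str, list[Run]] = {}
    for r in runs:
        by_model.setdefault(r.model, []).append(r)
    return {
        model: {
            "mean_cost_usd": mean(r.cost_usd for r in rs),
            "median_latency_s": median(r.latency_s for r in rs),
            "error_rate": sum(not r.ok for r in rs) / len(rs),
        }
        for model, rs in by_model.items()
    }


def winner(summary: dict[str, dict[str, float]]) -> str:
    """Lowest error rate wins; mean cost breaks ties."""
    return min(summary, key=lambda m: (summary[m]["error_rate"], summary[m]["mean_cost_usd"]))


if __name__ == "__main__":
    # Placeholder data showing the shape of the log, not real measurements.
    runs = [
        Run("model-a", 0.12, 8.0, True),
        Run("model-a", 0.10, 7.5, False),
        Run("model-b", 0.05, 4.0, True),
        Run("model-b", 0.06, 5.0, True),
    ]
    s = summarize(runs)
    print(s)
    print("winner:", winner(s))
```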

2. In‑product UX job (search, help, inbox, mobile).

Put Gemini 3.1 Flash‑Lite up against GPT‑5.3‑Instant and one China/local option on a real user flow (search query, help centre, inbox triage).

Pick whichever gives acceptable answers at the lowest cost and latency, then standardize on it for all “AI in the UI” work.
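“Acceptable answers at the lowest cost and latency” becomes concrete once you fix the bars up front. A sketch under assumed thresholds (90% of sampled answers marked acceptable, p95 latency under 800 ms; both are hypothetical, so set your own): filter on quality and latency first, then take the cheapest, with latency as the tie‑breaker.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    acceptable_rate: float          # share of sampled answers reviewers marked acceptable
    p95_latency_ms: float           # measured on the real user flow
    cost_per_1k_requests_usd: float


def pick_for_ui(
    cands: list[Candidate],
    min_acceptable: float = 0.9,
    max_p95_ms: float = 800.0,
) -> Optional[Candidate]:
    """Return the cheapest candidate that clears both bars, or None if none does."""
    eligible = [
        c for c in cands
        if c.acceptable_rate >= min_acceptable and c.p95_latency_ms <= max_p95_ms
    ]
    if not eligible:
        return None
    # Cost first, then latency as the tie-breaker.
    return min(eligible, key=lambda c: (c.cost_per_1k_requests_usd, c.p95_latency_ms))
```

Filtering before ranking keeps a very cheap but slow or sloppy option from winning on price alone.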

3. Cheap automation job (internal tools, unsexy tasks).

For things like ticket triage, log summarization or rough drafts, test DeepSeek V4, one China model and one uncensored local model on your own infra. Choose the one that is “good enough” at the lowest all‑in cost (API or GPU) and route that entire class of workload to it.
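One rough way to compare “all‑in” API and self‑hosted cost is a break‑even volume. This sketch assumes a single blended (input plus output) API price per 1M tokens and a flat monthly self‑hosting cost covering hardware amortization, power and ops time. The example numbers are hypothetical, and the comparison only holds if the self‑hosted box can actually serve the volume.

```python
def api_monthly_usd(tokens_per_month: float, blended_price_per_m: float) -> float:
    """Pay-per-token cost for a month at a blended price per 1M tokens."""
    return tokens_per_month / 1_000_000 * blended_price_per_m


def breakeven_tokens_per_month(self_hosted_monthly_usd: float, blended_price_per_m: float) -> float:
    """Monthly token volume at which a fixed self-hosting cost equals the API bill."""
    return self_hosted_monthly_usd / blended_price_per_m * 1_000_000


def cheaper_option(tokens_per_month: float, blended_price_per_m: float, self_hosted_monthly_usd: float) -> str:
    """'self-hosted' only if strictly cheaper; ties go to the API (no ops burden)."""
    api = api_monthly_usd(tokens_per_month, blended_price_per_m)
    return "self-hosted" if self_hosted_monthly_usd < api else "api"


if __name__ == "__main__":
    # Hypothetical: $0.50 per 1M tokens blended API price vs $1,500/month self-hosted.
    print(f"break-even: {breakeven_tokens_per_month(1_500, 0.50):,.0f} tokens/month")
    for volume in (1e9, 5e9):
        print(f"{volume:,.0f} tokens/month -> {cheaper_option(volume, 0.50, 1_500)}")
```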

Then do the boring but important part: lock this routing in for 6–8 weeks and ignore new launches unless they beat your current choices on cost or reliability, not just on leaderboard screenshots.
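The lock rule can be written down so nobody relitigates it every launch day. This is one interpretation, stated as an assumption: during the lock a challenger replaces the incumbent only if it is cheaper without being less reliable, or more reliable without costing more. Once the lock (6 weeks by default here) expires, you rerun the full bake‑off. Leaderboard rank is deliberately not an input.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Score:
    cost_per_task_usd: float
    error_rate: float  # from your own evals on this job, not a public leaderboard


def decide(incumbent: Score, challenger: Score, locked_since: date, today: date, lock_weeks: int = 6) -> str:
    """Return 'switch', 'keep' or 're-evaluate' for one routed class of work."""
    if today - locked_since >= timedelta(weeks=lock_weeks):
        return "re-evaluate"  # lock expired: rerun the full comparison
    cheaper = challenger.cost_per_task_usd < incumbent.cost_per_task_usd
    more_reliable = challenger.error_rate < incumbent.error_rate
    no_costlier = challenger.cost_per_task_usd <= incumbent.cost_per_task_usd
    no_less_reliable = challenger.error_rate <= incumbent.error_rate
    # Within the lock, switch only for a win on one axis that gives nothing back on the other.
    if (cheaper and no_less_reliable) or (more_reliable and no_costlier):
        return "switch"
    return "keep"
```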

Conclusion

If last year was about arguing “Claude vs GPT vs Gemini”, this week is the moment that question stopped being useful.

From here on, the game for anyone who actually ships product is simple:

Treat GPT‑5.4, Gemini, DeepSeek, Claude, Grok, the China stack and local models as a portfolio, route each workflow to the model that wins on cost, latency, risk and sovereignty, and let everyone else keep fighting about which mascot is smartest.