When Anthropic Says No and OpenAI Says Yes

For months, one AI lab pushed back on mass surveillance and autonomous weapons while most people scrolled past. Now OpenAI is in the room and everyone has an opinion.

The current drama didn’t appear out of thin air last week. The Pentagon–Anthropic fight over AI safeguards has been simmering for months, mostly in the background while the wider public barely glanced at it.

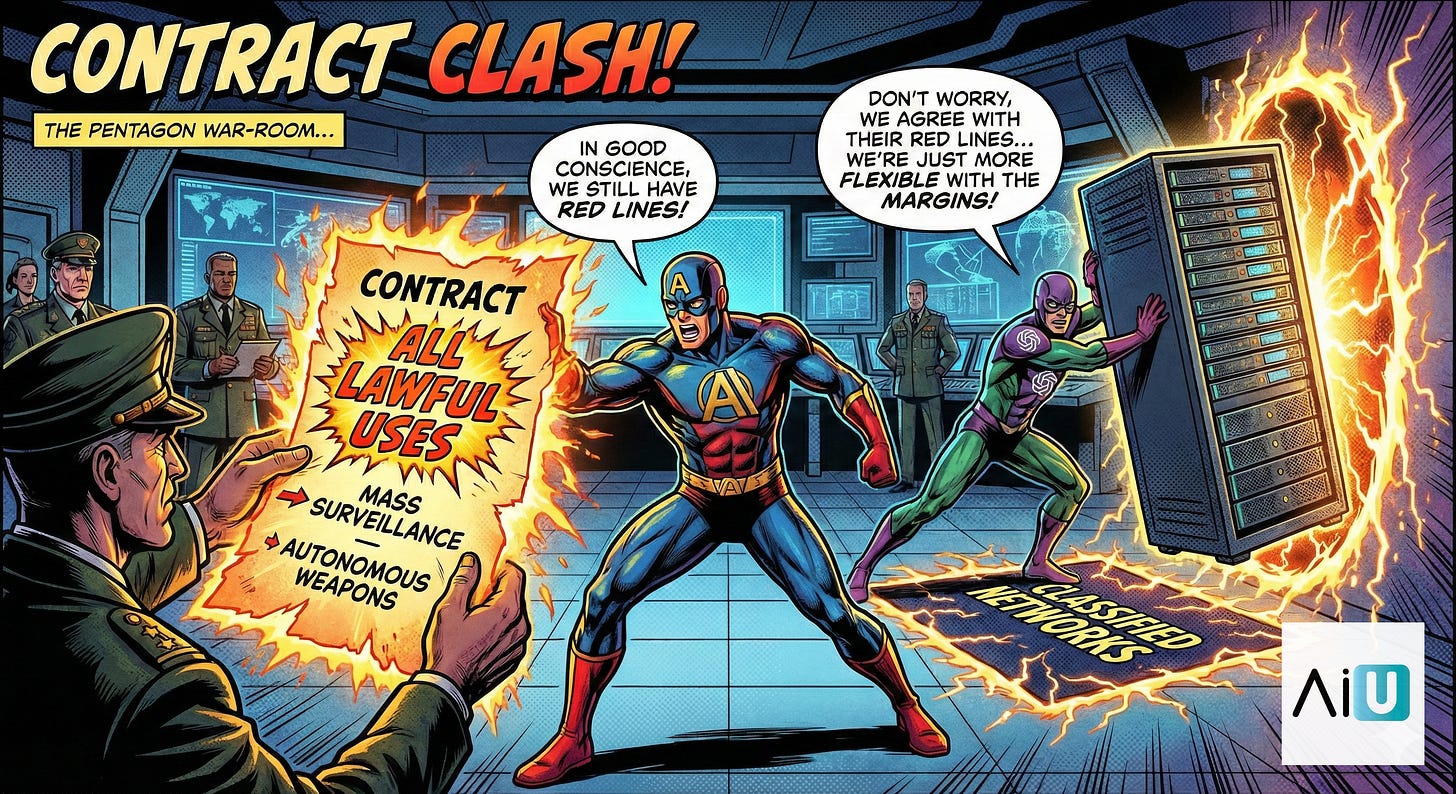

Since late 2025, defense officials have been pushing Anthropic, along with OpenAI, Google and xAI, to let the US military use their models for “all lawful purposes,” from weapons development to intelligence and battlefield operations. Anthropic has been the outlier in those talks, insisting on two hard, written guarantees:

no mass surveillance of American citizens, and

no fully autonomous weapons systems.

That dispute hasn’t been secret. Axios, Reuters, The Hill, PBS and others have reported that negotiations dragged on for months, that the Pentagon was “fed up,” and that Anthropic kept saying there was “virtually no progress” because the supposed compromise language still contained loopholes big enough to drive a drone swarm through. But outside the tech and policy bubble, almost nobody was paying attention.

Only when three things happened in rapid succession did it break into mainstream feeds:

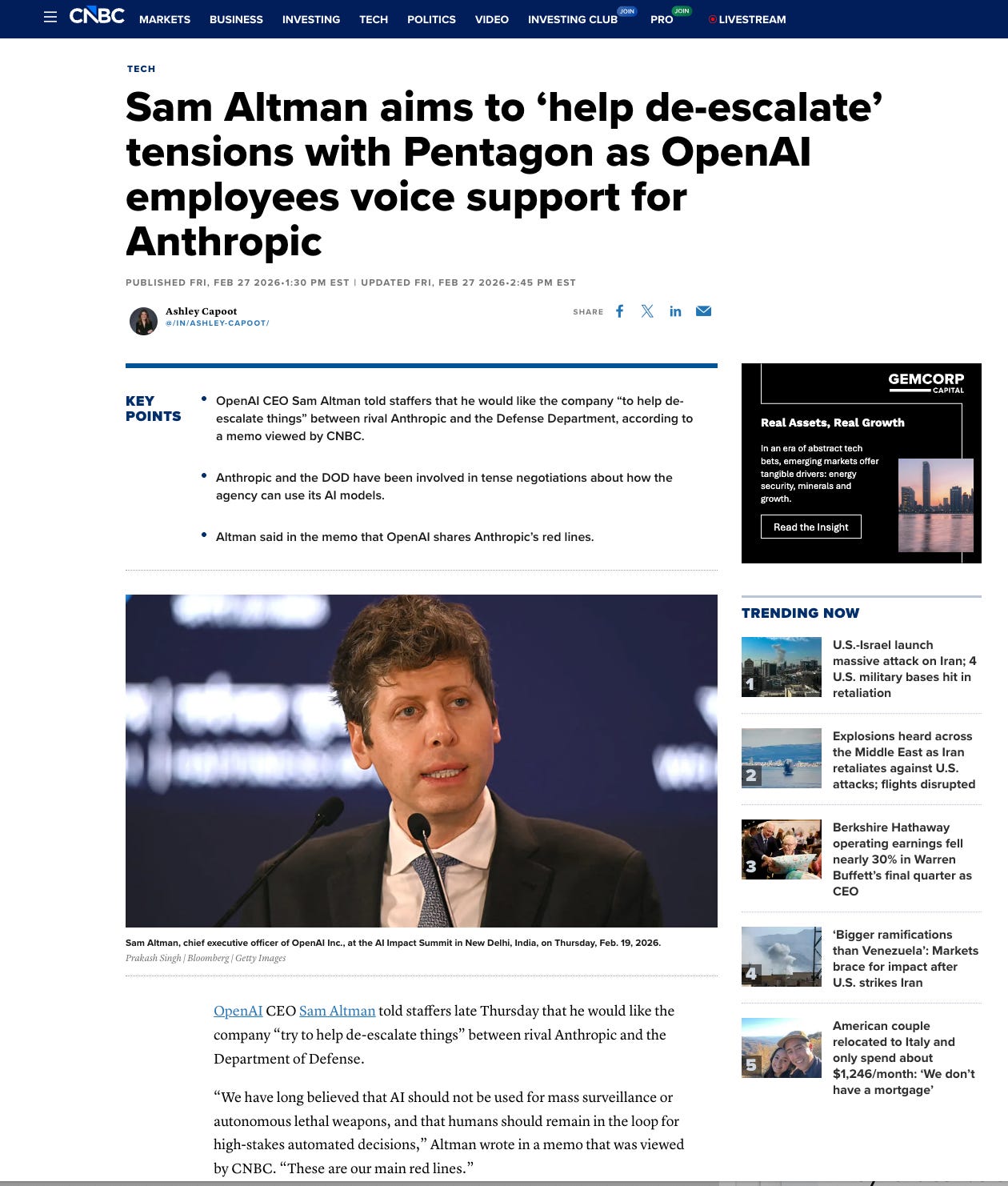

Anthropic publicly said it “cannot in good conscience accede” to the Pentagon’s final offer and was prepared to walk away from a contract worth up to $200M rather than drop its two red lines.

After this months‑long standoff, Trump ordered federal agencies to stop using Anthropic, and the Pentagon began treating the company as a supply‑chain “risk.”

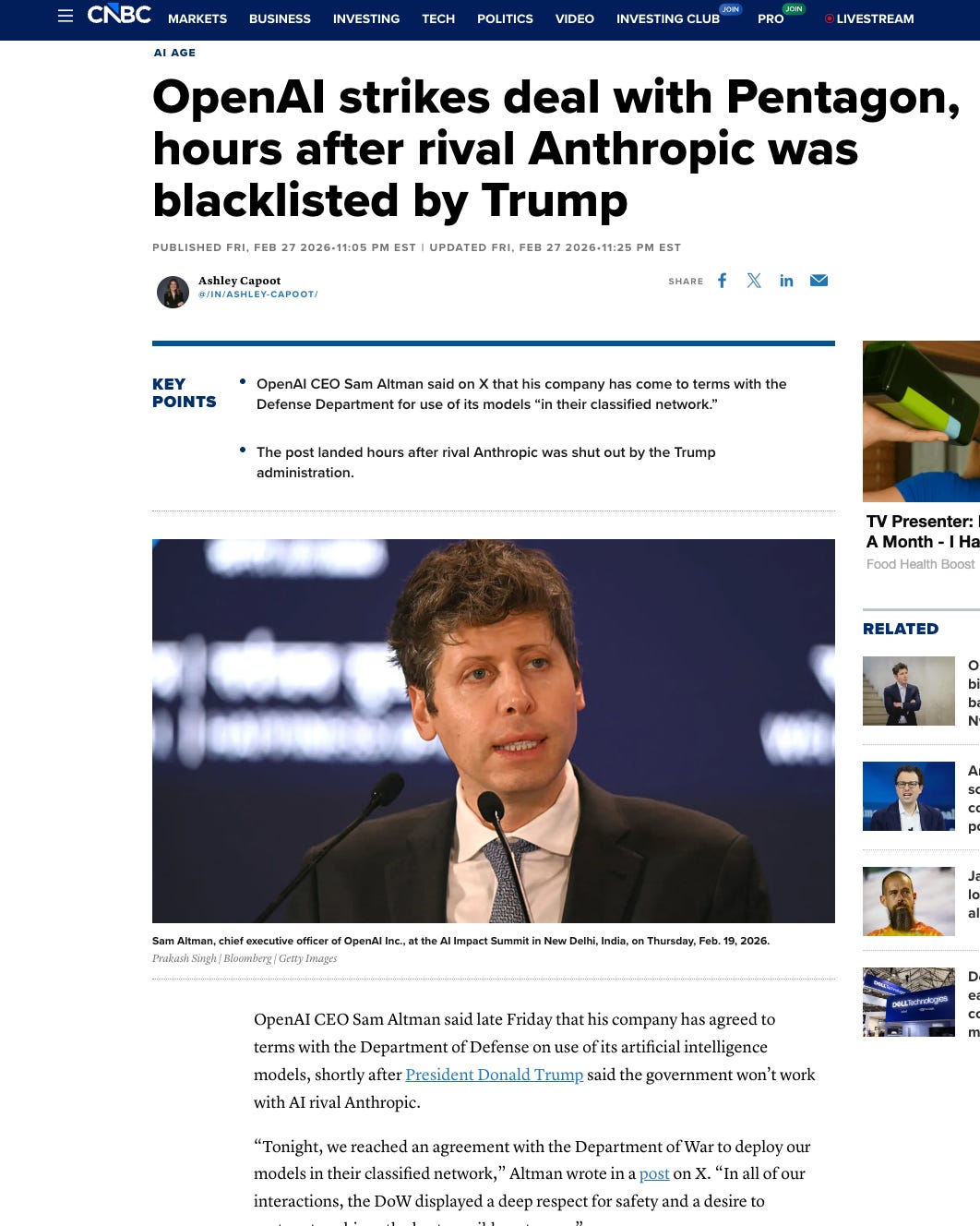

Within hours, OpenAI announced a fresh deal to put its models onto classified Pentagon networks, with Sam Altman saying OpenAI shares Anthropic’s red lines even as it steps into the very space Anthropic just vacated. Of course, as with everything involving @Sama, it played out far more dramatically in real time.

And then, of course, just 10 hours later, this happened. Ten hours, to be precise. 🤔

Unsurprisingly, timelines on Twitter and Reddit have filled up with hot takes about secret scripts, stage‑managed outrage and perfectly choreographed outcomes. It’s very tempting to jump straight to that conclusion, because the sequence is so clean:

a months‑long dispute barely anyone followed suddenly explodes,

one company is punished, another steps in, and

everyone starts talking about “optics.”

A more grounded approach is to treat the whole episode as a live hypothesis about how power, profit and secrecy interact around military AI: note the red flags, track the incentives, and accept that a real audit will only be possible as more documents, hearings and leaks surface over time. I’m sure they will.

There is more to this story than the visible drama: the months‑long feud, the sudden ban, the rival stepping in, and everyone reading the optics. There’s a whole technical layer we haven’t touched yet:

» What “all lawful uses” actually allows,

» What “mass domestic surveillance” means in code and contracts,

» What “fully autonomous weapons” means in code and contracts, and

» Why Anthropic is willing to lose a $200M deal rather than soften those guardrails.

All these questions need one proper deep dive. I can’t promise it, since I was planning to cover MWC Barcelona, which starts Monday, but if I can, I’ll try to zoom in on the quiet construction of an AI‑enabled panopticon, not just for Americans but for “foreign adversaries,” which increasingly means the rest of us. It’ll be on The Intelligent Founder, where all the long essays and podcasts live. You can follow and subscribe here.