Everyone’s rushing to deploy agentic AI. Fewer people are thinking about what happens when those agents start handling data they absolutely shouldn’t.

The reality is that the moment you put a chat-based AI agent into production, users will, intentionally or accidentally, paste PII, API keys, credentials, and NSFW content straight into the conversation. Your agent will dutifully process it, log it, maybe even forward it to a third-party API.

Congratulations, you now have a compliance incident.

This isn’t a theoretical problem. It’s happening right now in every organisation experimenting with AI agents.

The good news » you don’t need to build guardrails from scratch. 👍

If you’re running workflows in n8n, there’s a dedicated Guardrails node that can intercept and block problematic inputs before they ever reach your agent. OpenAI offers a Moderation API that flags harmful content categories. And for enterprise teams on AWS, Bedrock has built-in guardrail capabilities that can filter inputs and outputs at the model layer.
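As a rough illustration of the Moderation API option, here is a minimal sketch that calls OpenAI's `/v1/moderations` REST endpoint using only the Python standard library. The helper names (`build_moderation_request`, `flagged_categories`, `moderate`) are ours, and the choice of the `omni-moderation-latest` model is an assumption, so check the current API reference before relying on it.

```python
import json
import urllib.request

MODERATION_URL = "https://api.openai.com/v1/moderations"


def build_moderation_request(text: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request to the moderation endpoint."""
    # Model name is an assumption; swap in whichever moderation model you use.
    body = json.dumps({"model": "omni-moderation-latest", "input": text}).encode("utf-8")
    return urllib.request.Request(
        MODERATION_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def flagged_categories(response: dict) -> list[str]:
    """Return the sorted names of every category the API marked as true."""
    result = response["results"][0]
    return sorted(name for name, hit in result["categories"].items() if hit)


def moderate(text: str, api_key: str) -> list[str]:
    """Send the text for moderation and return the flagged category names."""
    request = build_moderation_request(text, api_key)
    with urllib.request.urlopen(request, timeout=10) as reply:
        return flagged_categories(json.load(reply))
```

An empty list from `moderate(...)` means nothing was flagged. Note that this endpoint covers harmful-content categories, so pasted secrets and PII still need a separate check.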


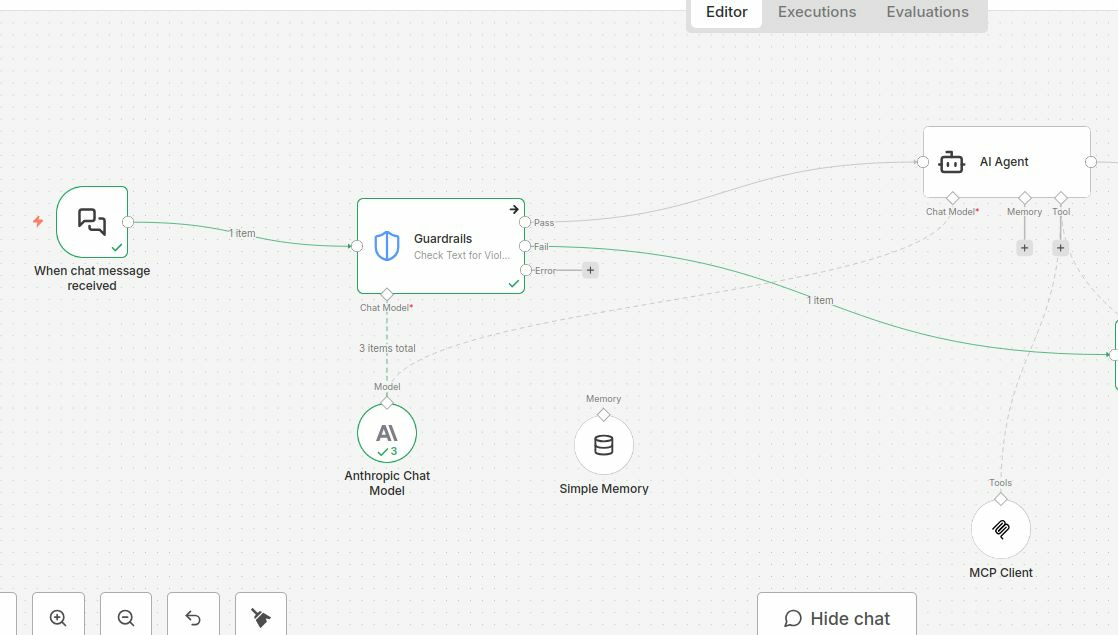

We built a simple workflow (think of it as a conveyor belt for messages) that shows what happens when someone tries to send something dodgy to an AI agent.

Here’s the journey a message takes:

Step 1: User sends a message » Someone types something into the chat. This could be a normal question, or it could contain a credit card number, a password, an API key, or inappropriate content.

Step 2: The Guardrail checks it first » Before the message reaches the AI, it passes through a security checkpoint (the Guardrail node). Think of it like a nightclub bouncer: it inspects every message and decides if it’s safe to let through.

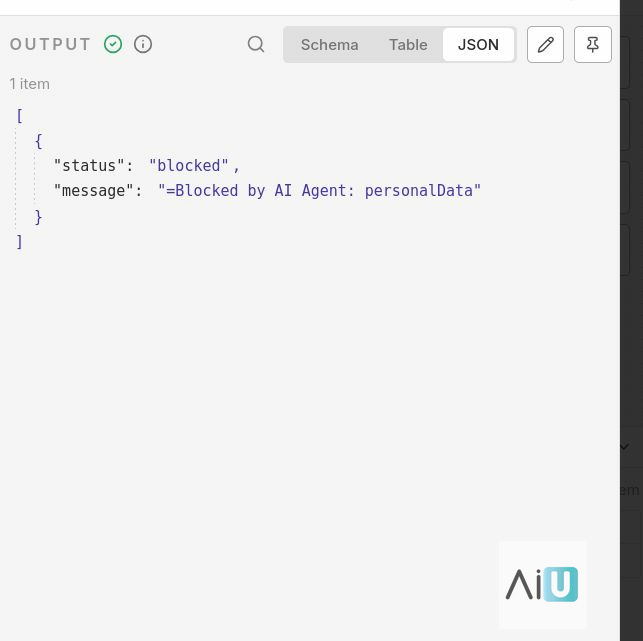

Step 3a: If the message is dodgy → BLOCKED » The guardrail catches it and stops it dead. The user gets told their request can’t be processed. The AI agent never even sees the sensitive data. Crisis averted.

Step 3b: If the message is clean → it goes through » The message passes the check and continues down the line to the AI agent, which processes it normally using tools like memory (so it remembers previous conversations) and web requests (so it can fetch information or take actions).
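To make the steps concrete, here is a minimal Python sketch of the same check-then-route logic. It is not how the n8n Guardrails node works internally. The regex patterns, the `BLOCKED_REPLY` text, and the function names are hypothetical and chosen for illustration, and a real deployment would need a much broader, tested rule set.

```python
import re

# Hypothetical patterns for illustration only.
PATTERNS = {
    "api_key": re.compile(r"\b(?:sk|pk)-[A-Za-z0-9_-]{20,}\b"),
    "password": re.compile(r"(?i)\bpassword\s*[:=]\s*\S+"),
}
# 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")

BLOCKED_REPLY = "Sorry, this request can't be processed."


def luhn_valid(digits: str) -> bool:
    """Luhn checksum, used to cut false positives on long digit runs."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_violations(message: str) -> list[str]:
    """Return the names of every rule the message trips."""
    found = [name for name, pattern in PATTERNS.items() if pattern.search(message)]
    for match in CARD_CANDIDATE.finditer(message):
        digits = re.sub(r"\D", "", match.group())
        if luhn_valid(digits):
            found.append("credit_card")
            break
    return found


def handle_message(message: str, agent) -> str:
    """Step 2: check first. Step 3: block, or forward to the agent."""
    if find_violations(message):
        return BLOCKED_REPLY  # Step 3a: the agent never sees the message
    return agent(message)  # Step 3b: the clean message goes through
```

Here `agent` is any callable that takes the message and returns a reply. The important design choice is that the check runs before the agent is invoked, so a blocked message is never passed on to the model or its tools.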

The key point:

Without this guardrail, every message, including ones containing your company’s secrets or a customer’s personal data, goes straight to the AI model. With it, bad requests get caught at the door.

The key takeaway:

Guardrails aren’t optional safety theatre. They’re the difference between a prototype and a production system.

Build them in from day one, not after your first data leak.